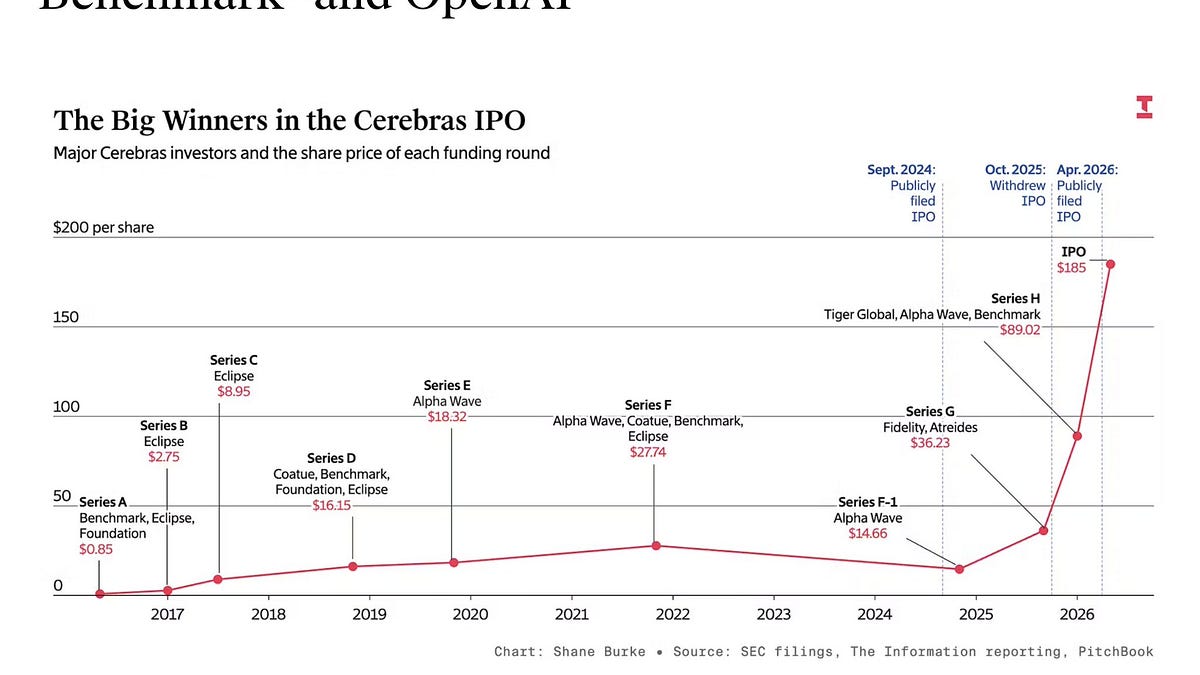

Cerebras Systems, the AI chip company now valued at $60 billion, nearly collapsed in its early days while burning $8 million per month. The startup, founded by a team that previously sold SeaMicro to AMD for $334 million in 2012, faced existential cash-flow pressure before its wafer-scale engine technology found a market in hyperscale AI training. The core founding team of Andrew Feldman, Gary Lauterbach, Michael James, Sean Lie, and Jean-Philippe Fricker staked the company on a radical architectural bet: building the largest single chip in the semiconductor industry. That bet has now paid off with a valuation that places Cerebras among the most valuable private AI hardware companies globally. The survival story matters now because it shows how capital-intensive deep-tech startups can cross the valley of death and capture a slice of the AI infrastructure boom. Investors and hyperscalers are watching that dynamic closely as the next wave of chip startups seeks funding.

The Wafer-Scale Bet That Nearly Broke the Company

Cerebras' core technical gamble was the wafer-scale engine, a single chip built from an entire silicon wafer that is kept in one continuous piece rather than diced into individual chips. This design eliminated the performance penalties of inter-chip communication, a bottleneck that plagued traditional GPU clusters for AI workloads. The engineering challenge was immense: manufacturing yields on a single wafer-scale chip are notoriously low, and the thermal and power management problems were unprecedented in the industry. The company burned through $8 million per month during its development phase, a rate that adds up to $144 million over 18 months, more than most venture capital rounds provide. The founding team, veterans of SeaMicro's low-power server architecture, understood the physics but underestimated the manufacturing complexity. The first-generation wafer-scale engine required custom packaging, specialized cooling systems, and a complete rethinking of how to test and validate a chip that was physically larger than any reticle-limited design. This engineering overhead translated directly into cash burn, as each failed wafer run cost millions and delayed revenue generation by quarters. The team also had to solve thermal expansion mismatches between the silicon wafer and the packaging substrate, a problem that caused early prototypes to crack under load.

The thermal management solution that eventually allowed Cerebras to move from prototype to production involved a custom cooling architecture that circulated coolant directly over the silicon surface, maintaining uniform temperature distribution across the die. The engineering team spent over a year on this cooling system alone, a development cost that compounded the monthly burn. Once yield and thermal problems were sufficiently controlled, Cerebras shipped its first Wafer-Scale Engine to customers in 2019, generating initial revenue that extended the company's runway and demonstrated commercial viability. The first-generation WSE contained 1.2 trillion transistors and 400,000 AI-optimized cores, a specification that dwarfed any GPU available at the time. The second-generation WSE-2, announced in 2021, scaled to 2.6 trillion transistors, validating the architectural roadmap and attracting the investor attention that ultimately underpinned the $60 billion valuation.

Where the $8M Monthly Burn Went

The $8 million monthly burn rate broke down into three primary cost centers: wafer fabrication, packaging and assembly, and engineering headcount. Wafer-scale chips require advanced process nodes at foundries like TSMC, where a single 300mm wafer run for a 5nm or 3nm design costs $30,000 to $50,000. Cerebras needed multiple runs per month to iterate on design flaws and improve yield. The custom interposer and packaging solution, necessary to connect the wafer-scale die to power delivery and data I/O, added another $2 million per month in non-recurring engineering costs. The engineering team, which grew from 50 to over 300 people during the development phase, consumed $3 million per month in salaries and benefits. The company had no revenue during this period, so every dollar came from venture capital. The $334 million SeaMicro exit gave the founding team credibility with investors, but it did not guarantee follow-on funding. By mid-2018, Cerebras had raised just over $100 million from investors including Benchmark Capital and Sequoia Capital, but at $8 million per month that implied a runway of just over 12 months. The company also spent heavily on specialized test equipment, as no off-the-shelf tester could handle a chip the size of a dinner plate.
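As a rough check on the figures above, the breakdown and the runway claim can be reproduced with simple arithmetic. The sketch below assumes the three cost centers account for the entire $8 million, so fabrication is treated as the residual, and that the full $100 million was still available in mid-2018. Both are simplifying assumptions, and the function names are illustrative only.

```python
# Figures quoted in the article, in millions of USD per month.
TOTAL_BURN = 8.0
PACKAGING_NRE = 2.0
ENGINEERING_PAYROLL = 3.0

# Assumption: all capital raised by mid-2018 was still on hand (millions of USD).
CASH_ON_HAND = 100.0


def implied_fabrication_burn(total, packaging, payroll):
    """Residual monthly spend left for wafer fabrication.

    Assumes the three cost centers add up to the full burn rate.
    """
    return total - packaging - payroll


def runway_months(cash_on_hand, monthly_burn):
    """Months of operation at a constant burn rate with no revenue."""
    if monthly_burn <= 0:
        raise ValueError("monthly_burn must be positive")
    return cash_on_hand / monthly_burn


fab_burn = implied_fabrication_burn(TOTAL_BURN, PACKAGING_NRE, ENGINEERING_PAYROLL)
runway = runway_months(CASH_ON_HAND, TOTAL_BURN)

print(f"Implied fabrication burn: ${fab_burn:.1f}M per month")
print(f"Runway on ${CASH_ON_HAND:.0f}M: {runway:.1f} months")
```

Under these assumptions fabrication accounts for the remaining $3 million per month, and $100 million lasts 12.5 months, consistent with the "just over 12 months" figure.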

AMD, SeaMicro, and the Competitive Reshuffle

The founding team's 2012 sale of SeaMicro to AMD for $334 million created a unique competitive dynamic. AMD acquired SeaMicro to enter the low-power server market, a strategy that ultimately failed as the company pivoted toward high-performance computing and GPU architecture. The SeaMicro acquisition gave AMD a foothold in dense server design, but the team's departure to found Cerebras left AMD without the talent to execute on that vision. Today, AMD competes directly with Cerebras in the AI accelerator market through its Instinct GPU line, but the two companies take fundamentally different architectural approaches. AMD relies on chiplets and interconnect standards like Infinity Fabric, while Cerebras doubles down on monolithic wafer-scale integration. The competitive landscape also includes Nvidia, which dominates the AI training market with its GPU clusters, and startups like Groq and SambaNova that target inference workloads. Cerebras has carved out a niche in training large language models that require massive memory bandwidth and low latency, a segment where its wafer-scale architecture provides a 10x performance advantage over GPU clusters for specific model architectures. This performance edge comes from the wafer-scale engine's ability to keep all compute elements fed with data simultaneously, eliminating the memory bottlenecks that slow down GPU clusters.

Downstream Effects on Hyperscalers and Fabs

Cerebras' survival and growth have direct implications for the semiconductor supply chain and hyperscale cloud providers. The company's wafer-scale engines require dedicated manufacturing capacity at TSMC, where the advanced process nodes are already in high demand from Apple, Nvidia, and AMD. Each Cerebras engine occupies more than 50 times the silicon area of a typical large GPU die and uses an entire wafer, meaning the company must secure significant allocation from TSMC's limited capacity. This creates a secondary effect: as Cerebras scales, it competes directly with Nvidia and AMD for wafer starts at TSMC's 5nm and 3nm fabs. The hyperscalers, Amazon Web Services, Microsoft Azure, and Google Cloud, are watching this dynamic closely because they need alternative AI chip suppliers to reduce dependence on Nvidia. Cerebras has already deployed its systems at cloud providers and enterprise customers, offering a differentiated value proposition for workloads that benefit from its memory architecture. The company's survival also validates the wafer-scale approach for other chip startups, and it is already drawing new capital to similar architectural bets across the AI hardware sector. For TSMC, accommodating Cerebras means reserving capacity that would otherwise flow to high-volume customers, a trade-off that becomes more acute as AI demand grows.
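The allocation pressure can be made concrete with a back-of-the-envelope comparison. The sketch below uses publicly reported die sizes that the article does not cite (about 46,225 mm² for the Wafer-Scale Engine and about 815 mm² for a large data-center GPU die), plus a common textbook approximation for gross dies per wafer. The exact area ratio depends on which GPU die serves as the baseline, and the estimate ignores defect yield entirely.

```python
import math

WAFER_DIAMETER_MM = 300.0

# Assumptions: publicly reported die areas, not figures from the article.
WSE_AREA_MM2 = 46_225.0
GPU_DIE_AREA_MM2 = 815.0


def gross_dies_per_wafer(diameter_mm, die_area_mm2):
    """Approximate count of whole dies that fit on a round wafer.

    Uses the common estimate: wafer area divided by die area, minus an
    edge-loss term for partial dies along the circumference. Yield loss
    from defects is not modeled.
    """
    wafer_area = math.pi * (diameter_mm / 2) ** 2
    edge_loss = math.pi * diameter_mm / math.sqrt(2 * die_area_mm2)
    return math.floor(wafer_area / die_area_mm2 - edge_loss)


area_ratio = WSE_AREA_MM2 / GPU_DIE_AREA_MM2
gpu_dies = gross_dies_per_wafer(WAFER_DIAMETER_MM, GPU_DIE_AREA_MM2)

print(f"Wafer-scale engine vs GPU die area: about {area_ratio:.0f}x")
print(f"Gross GPU dies per 300mm wafer (before yield loss): about {gpu_dies}")
print("Wafer-scale engines per 300mm wafer: 1")
```

Under these assumptions, every wafer start allocated to a wafer-scale engine produces a single part, where the same wafer would otherwise produce dozens of candidate GPU dies before yield loss.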

The downstream implication for chip design houses extends across the entire fabless ecosystem. Every semiconductor company that relies on TSMC faces the same allocation tension: high-volume, predictable revenue from Apple and Nvidia versus smaller, irregular orders from AI accelerator startups. TSMC has begun reserving dedicated capacity blocks for AI compute companies as part of government-backed diversification programs, a shift that benefits Cerebras but increases allocation uncertainty for legacy chip vendors. For the hyperscalers, the Cerebras deployment model, where the company sells integrated systems rather than bare silicon, creates a procurement relationship distinct from buying GPUs. This systems-level approach requires Cerebras to own more of the supply chain, including packaging and integration, which raises its capital requirements but drives higher gross margins per accelerator delivered. The long-term implication is a bifurcated AI hardware market: commodity GPU clusters for general-purpose training and specialized accelerator systems for workload-specific efficiency gains.

The Policy Signal in Cerebras' $60B Valuation

Cerebras' $60 billion valuation sends a clear signal to the semiconductor industry and its regulators: the market believes that architectural innovation in AI hardware can generate outsized returns, even in a market dominated by Nvidia. This valuation, achieved without the revenue scale of established players, reflects investor conviction that the AI infrastructure buildout will require multiple chip architectures to meet diverse workload requirements. The valuation also carries implications for export controls and national security policy. Wafer-scale chips with high compute density fall under the same export restrictions as advanced GPUs, meaning Cerebras must navigate the same regulatory landscape that constrains Nvidia's sales to China. The company's ability to achieve a $60 billion valuation despite these headwinds suggests that the market sees AI chip demand as structurally under-supplied for the next decade. For regulators, the Cerebras story reinforces the strategic importance of domestic advanced semiconductor manufacturing capacity, as wafer-scale chips require the most advanced foundry nodes that are concentrated in Taiwan and South Korea. The valuation also pressures policymakers to accelerate funding for domestic fabs, since any disruption to TSMC's output would directly threaten Cerebras' production pipeline.

The trajectory from near-death to a $60 billion valuation positions Cerebras as a bellwether for the next generation of AI hardware startups. The company must now prove it can scale from niche success to mainstream adoption, a transition that will require navigating manufacturing constraints, competitive pressure from Nvidia's next-generation architectures, and the capital intensity of building a direct sales force and support organization. The founding team's experience at SeaMicro taught them how to exit a company, but Cerebras faces a different challenge: building an independent, profitable business that can sustain its valuation without being acquired. The wafer-scale architecture gives them a moat, but the moat is only as deep as the company's ability to keep iterating on process technology and software tooling. Investors will watch the next 18 months closely, as Cerebras must convert its valuation into revenue growth that justifies the multiple. If the company succeeds, it will validate the thesis that radical architectural bets can disrupt even the most entrenched semiconductor markets. If it stumbles, the $8 million monthly burn rate that nearly killed the company will look like a warning sign for the entire AI chip startup ecosystem.

