Memory chip makers are entering a supercycle that is reshaping the semiconductor industry, driven by insatiable AI demand for high-bandwidth memory (HBM). SK Hynix is fielding investment offers from big tech firms for dedicated production pipelines, Micron has completed the acquisition of a plant in Taiwan from Powerchip Semiconductor Manufacturing Corporation (PSMC) to boost DRAM and HBM capacity, and Samsung Electronics is accelerating construction of its P5 Fab 2 by six months, with work starting in July. The Roundhill Memory ETF surged over 30% in one week, reflecting investor conviction that the AI memory boom is not a flash in the pan but a structural shift. These moves are not incremental. They represent a coordinated, multi-billion-dollar bet that the memory shortage will persist through at least 2027. This matters now because the winners of this supercycle will determine which hyperscalers can scale AI training and inference, and which chip makers capture the windfall gains.

Where the $570M Came From: The Mechanics of HBM Pricing Power

The supercycle is fundamentally a pricing story. HBM, the specialized memory that sits next to AI accelerators like Nvidia's GPUs, commands a significant premium over standard DRAM. SK Hynix, the market leader, has seen its HBM revenue explode as hyperscalers compete for allocation. The company is now receiving investment offers from big tech firms, including cloud providers and AI startups, that want to secure dedicated production pipelines. These are not simple purchase orders. They are equity-like commitments that guarantee supply in exchange for capital. This structure creates a virtuous cycle: SK Hynix uses the cash to expand capacity, which in turn locks in customers for multi-year contracts. The pricing power is so strong that analysts at Roth project the supercycle will extend beyond 2027, a timeline that would mark the longest upcycle in memory industry history. The mechanism is straightforward: AI training clusters require more HBM per GPU with each accelerator generation, and each generation of HBM (HBM2e, HBM3, HBM4) requires more layers, more complexity, and more silicon. This drives up both unit prices and total addressable market, creating a structural shift away from the commodity-like pricing that has historically plagued DRAM and NAND. The memory makers are now capturing value that previously flowed to downstream customers, and this pricing power is the engine of the supercycle. If the Roth projection holds, it implies at least three more years of structurally elevated demand, a duration that would dwarf any prior upcycle and could reprice the sector's earnings multiple.

How the Money Flows: P&L Impact Across the Memory Trio

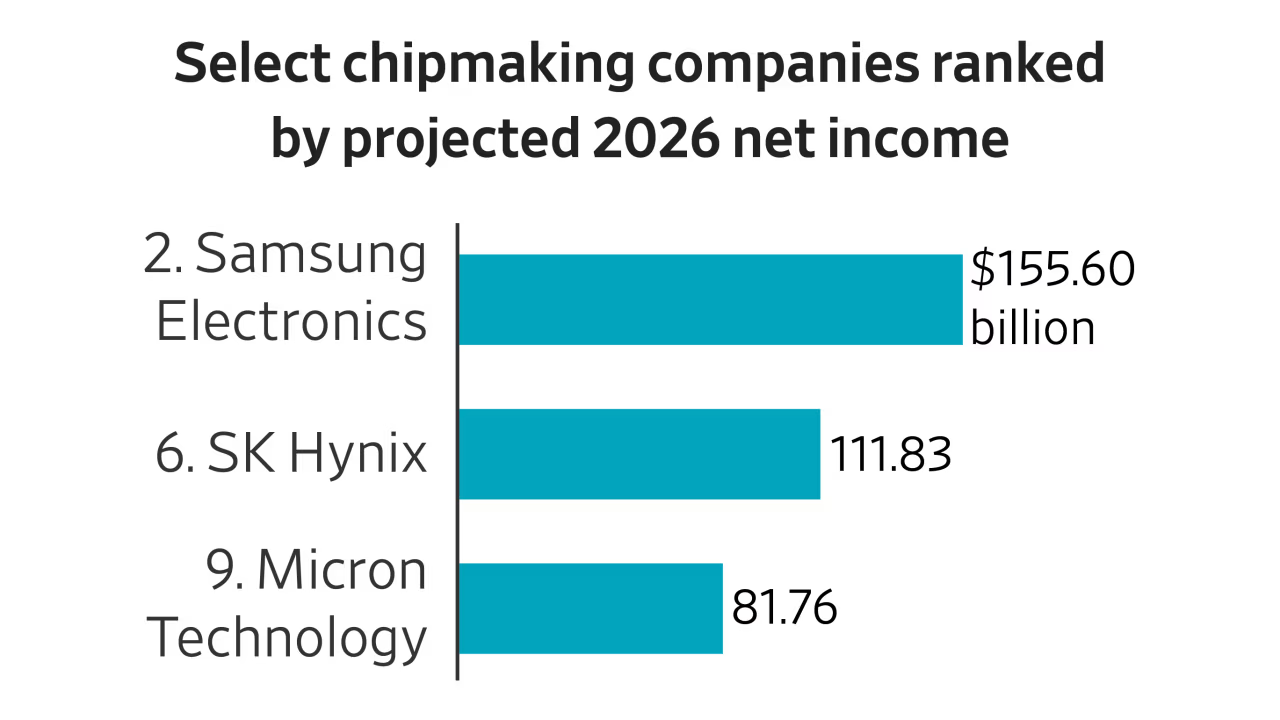

The financial implications are stark. SK Hynix, already the most profitable memory maker on an operating margin basis, is poised to generate record free cash flow as its HBM shipments ramp. The custom pipeline deals with big tech firms will effectively pre-sell capacity, reducing the risk of oversupply that has historically crashed memory prices. Micron's Taiwan acquisition from PSMC adds immediate DRAM and HBM manufacturing flexibility without the multi-year lead time of a greenfield fab. The plant, located in Taichung, will be converted to produce advanced memory nodes, giving Micron a faster path to capturing HBM demand. Samsung's decision to accelerate P5 Fab 2 by six months, with construction starting in July, is a capital allocation signal. The mega-fab, part of Samsung's Pyeongtaek campus, will focus on HBM and advanced DRAM, reinforcing the Pyeongtaek site as the company's primary vehicle for capturing the AI memory wave. The accelerated timeline means Samsung is willing to spend billions upfront to avoid missing the supercycle. The Roundhill Memory ETF's 30% weekly gain reflects this optimism, but the real test will be whether these companies can convert capacity expansion into sustainable earnings growth. The risk is that all three players overbuild, but the current demand trajectory, driven by hyperscaler capex that is rising 40% year-over-year, suggests the market can absorb the supply.

Competitive Reshuffle: Who Gains and Who Loses Share

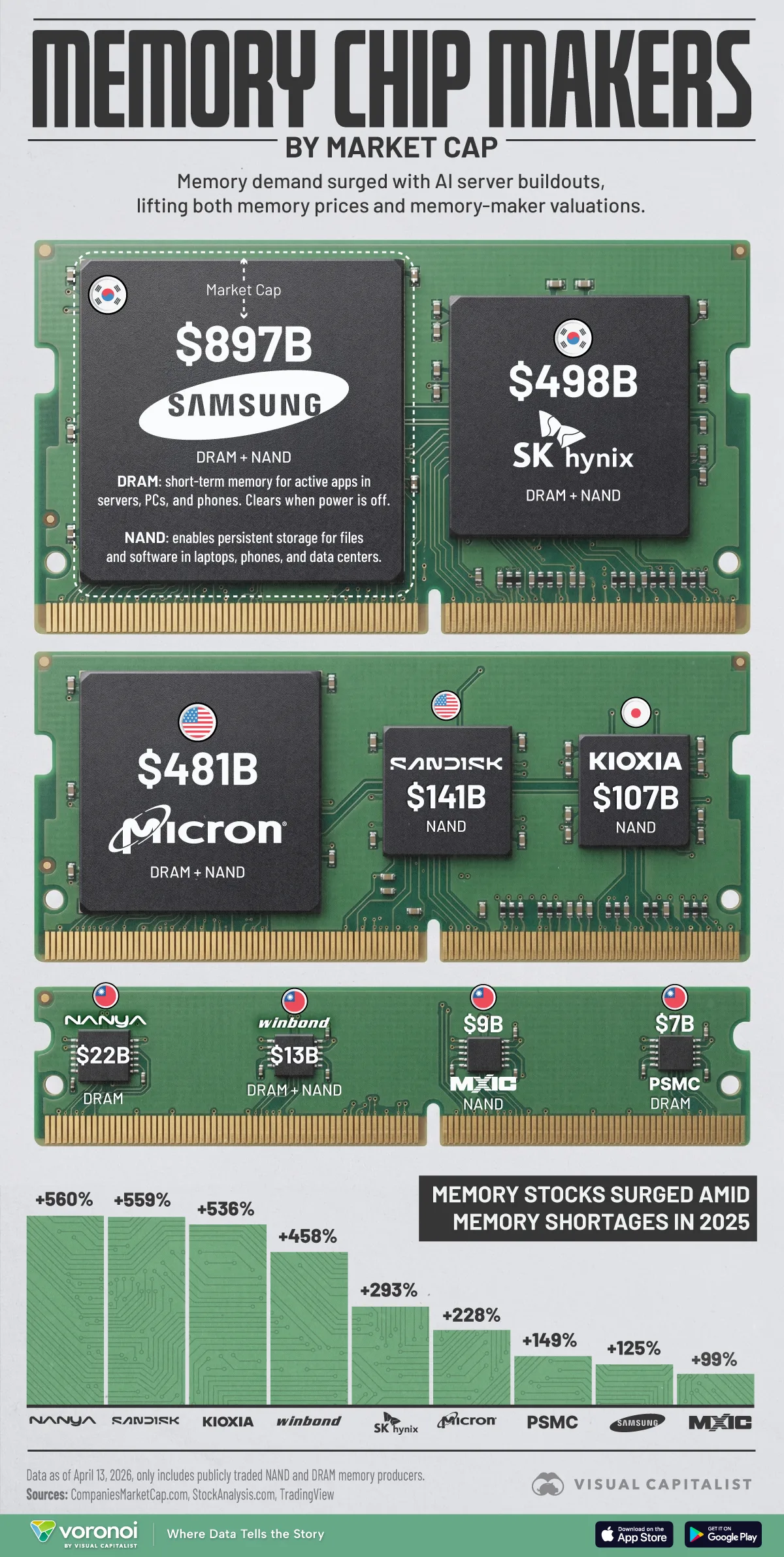

The supercycle is redrawing the competitive map. SK Hynix holds the pole position in HBM, having shipped the first HBM3 and HBM3e products to Nvidia. Its willingness to accept big tech investment offers creates a moat: customers who invest in dedicated pipelines are less likely to switch suppliers. This capital-for-supply arrangement is a structural shift from transactional procurement to strategic partnership, and it rewards incumbents with established yields and process know-how. The more equity-like commitments SK Hynix secures, the harder it becomes for Micron or Samsung to peel those customers away on price alone. Micron's Taiwan acquisition is a direct response to this dynamic. By buying PSMC's plant, Micron gains a foothold in Taiwan's advanced memory ecosystem, which is dominated by TSMC's co-packaging capabilities. This gives Micron a logistical advantage in HBM to rival SK Hynix's Korean base, and it shortens the distance between memory output and the packaging lines where HBM stacks are bonded to AI accelerators. Samsung, the largest memory maker by revenue, is playing catch-up. Its P5 Fab 2 acceleration by six months, with construction now scheduled to begin in July, is a capital-intensive commitment to close the HBM technology gap and reclaim share lost to SK Hynix over the past two product generations. The competitive dynamics are not zero-sum: all three players will grow revenue as the supercycle expands the total market, but the winner will be the one that can deliver the highest bandwidth per watt at the lowest cost. The losers are smaller memory makers like Nanya and Winbond, which lack the scale and technology to compete in HBM. They will be relegated to legacy DRAM and NAND markets, where pricing power is weaker and the margin profile is structurally inferior.

Downstream Shockwaves: Hyperscalers, Fabs, and Enterprise Buyers

The second-order effects are rippling through the supply chain. Hyperscalers, including Amazon, Microsoft, Google, and Meta, are the ultimate customers, and they are already adjusting their procurement strategies. The custom pipeline deals with SK Hynix signal a shift from spot purchasing to long-term strategic partnerships. This reduces price volatility but also locks in costs, which will be passed through to enterprise AI customers. On the fab side, the construction acceleration at Samsung's P5 Fab 2 will strain equipment suppliers like ASML, Applied Materials, and Tokyo Electron. These companies are already at capacity, and the incremental demand for extreme ultraviolet (EUV) lithography tools and advanced deposition equipment will create bottlenecks that extend fab completion timelines even when the investment decisions are made quickly. The Roundhill Memory ETF's weekly surge also reflects an expectation that the equipment suppliers will see their backlogs grow, creating a halo effect across the semiconductor capital equipment sector. The memory supercycle also pressures TSMC's co-packaging capacity, as HBM must be stacked and bonded to AI accelerators. TSMC is expanding its advanced packaging capacity, but the timeline is tight. Enterprise buyers of AI servers will face higher total cost of ownership as memory costs rise. A single Nvidia H200 GPU requires 141GB of HBM3e, and the memory cost can account for 30-40% of the total GPU bill. This will push enterprises to optimize utilization and extend refresh cycles, moderating near-term demand even as the structural tailwind remains intact through the end of the decade.
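The memory arithmetic above can be made concrete with a rough sketch. It uses the 141GB-per-H200 figure and the 30-40% memory cost share quoted in the paragraph. The GPU price and the server size are hypothetical placeholders chosen only for illustration, not sourced figures, and the function names are invented for this example.

```python
# Back-of-the-envelope HBM arithmetic. Only the 141 GB per H200 figure and
# the 30-40% memory cost share come from the article; everything else here
# (price, GPU count, function names) is an illustrative assumption.

HBM_PER_H200_GB = 141  # HBM3e capacity per Nvidia H200, as stated in the text


def hbm_footprint_gb(num_gpus: int, hbm_per_gpu_gb: int = HBM_PER_H200_GB) -> int:
    """Total HBM capacity, in GB, across a group of identical GPUs."""
    return num_gpus * hbm_per_gpu_gb


def memory_cost_range(
    gpu_price: float, low_share: float = 0.30, high_share: float = 0.40
) -> tuple[float, float]:
    """Low and high estimate of the memory portion of one GPU's price."""
    return gpu_price * low_share, gpu_price * high_share


if __name__ == "__main__":
    ASSUMED_GPU_PRICE_USD = 30_000  # hypothetical price, not a sourced figure
    ASSUMED_GPUS_PER_SERVER = 8     # hypothetical server configuration

    total_gb = hbm_footprint_gb(ASSUMED_GPUS_PER_SERVER)
    low, high = memory_cost_range(ASSUMED_GPU_PRICE_USD)

    print(f"HBM per server: {total_gb} GB")
    print(f"Memory share of one GPU: ${low:,.0f} to ${high:,.0f}")
    print(
        "Memory share per server: "
        f"${low * ASSUMED_GPUS_PER_SERVER:,.0f} to "
        f"${high * ASSUMED_GPUS_PER_SERVER:,.0f}"
    )
```

Swapping in a different assumed price or server size shows how quickly the memory line item scales with cluster size, which is why buyers have an incentive to raise utilization before adding hardware.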

Policy and Strategy Signal: What the Supercycle Says About the Market

The memory supercycle is a strategic signal about the direction of the semiconductor industry. The willingness of big tech firms to invest directly in memory production pipelines represents a fundamental shift in how the supply chain is financed. Historically, memory makers built capacity on spec and suffered through boom-bust cycles that wiped out years of profit in a single quarter. Now, customers are providing capital upfront, effectively de-risking the expansion and smoothing the earnings profile for suppliers. This model, if it becomes standard, will accelerate the consolidation of memory manufacturing into the hands of the three largest players, as smaller firms cannot offer the same scale, technology, or balance sheet to absorb equity-like commitments from hyperscalers. The accelerated fab construction at Samsung and the acquisition by Micron also signal that Taiwan and Korea will remain the centers of advanced memory production, despite geopolitical risks. The U.S. CHIPS Act has not yet attracted significant memory investment, as the economics of building HBM fabs in America remain unfavorable relative to established Asian clusters where talent, equipment suppliers, and packaging infrastructure are co-located. The Roundhill Memory ETF's 30% weekly gain is the equity market's read that analysts at Roth and elsewhere are correct: the supercycle will extend beyond 2027, a timeline that would make this the longest sustained upcycle in memory industry history. The supercycle also validates the thesis that AI demand is not just about GPUs and accelerators. It is a systemic shift that touches every layer of the semiconductor stack. Memory, once a commodity vulnerable to oversupply shocks, is now a strategic asset where allocation, not price, is the primary battleground.

The supercycle will test whether the memory industry can sustain its newfound pricing power. If SK Hynix, Micron, and Samsung execute on their expansion plans, the market will absorb the supply and maintain healthy margins through 2027, supported by a demand trajectory that no single fab program can outrun in the near term. But the risk of overbuilding remains real, especially if AI capex growth slows or if a new memory technology, such as compute-in-memory or optical interconnects, disrupts the HBM standard. The smart money is watching the hyperscalers' capital expenditure guidance as the leading indicator. For now, the memory makers are in the driver's seat, and they are not letting up. The race to meet AI demand is a marathon, but the first lap belongs to those who can build the fastest, most efficient HBM production lines. The winners will be the ones who turn this supercycle into a permanent structural advantage.
