The memory chip industry is entering a structural supercycle, with AI demand for high-bandwidth memory (HBM) and conventional DRAM driving the most aggressive capacity expansion in a decade. SK Hynix is fielding direct investment offers from big tech firms seeking to lock in production pipelines, while Samsung Electronics has accelerated construction of its P5 Fab 2 mega-fab by six months, now set to break ground in July at its Pyeongtaek campus. The Roundhill Memory ETF surged more than 30% in a single week, reflecting investor conviction that this is not a cyclical uptick but a multiyear repricing of memory as an AI-essential commodity. Micron, which acquired a Taiwan plant from Powerchip Semiconductor Manufacturing Corporation (PSMC) in March, is also racing to boost DRAM and HBM output by 2028. The supercycle could extend beyond 2027, a duration that would reshape capital allocation across the semiconductor industry. Memory makers are no longer passive suppliers; they are becoming bottleneck gatekeepers for the entire AI infrastructure buildout.

Where the $570M Capacity Race Begins

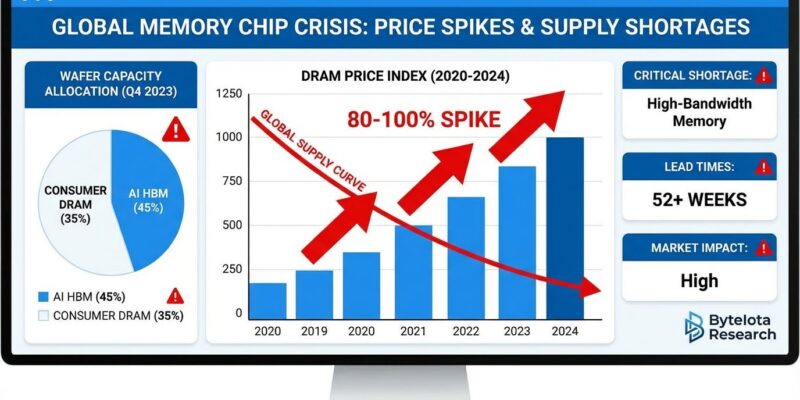

The supercycle is not a demand story alone; it is a supply-structure story. The fundamental mechanism is that AI training and inference workloads require HBM, a vertically stacked DRAM architecture that delivers far higher bandwidth, at lower energy per bit transferred, than conventional memory. Each Nvidia H100 or B200 GPU carries multiple HBM stacks, and as hyperscalers deploy clusters of 100,000-plus accelerators, the memory content per server has tripled in two years. This creates a demand profile that is both deep and persistent. SK Hynix, the dominant HBM supplier, is now fielding offers from big tech firms to invest directly in specific production pipelines, a structure that effectively pre-commits capacity years in advance and insulates SK Hynix from cyclical demand softness. Samsung's decision to accelerate P5 Fab 2 by six months is a direct response to this demand signal. The fab will produce advanced DRAM and HBM, and the compressed timeline suggests that Samsung sees the current order book as durable rather than speculative. Micron's March acquisition of the PSMC plant in Taiwan adds near-term wafer-start capacity for DRAM, bypassing the 18-to-24-month lead time for greenfield construction. All three players are betting that the current demand curve is not a spike but a step function.

Industry analysts at Roth note that lead times for HBM3E have stretched to 52 weeks, a clear sign that even the current production ramp cannot fully meet near-term commitments from AI chip customers. That supply tightness validates the investment urgency at Samsung and SK Hynix, and it explains why Micron bought existing capacity in Taiwan rather than waiting for internal expansion to come online.

How the P&L Gets Rewired

The financial impact of this supercycle flows through three channels: pricing power, revenue mix, and capital efficiency. HBM commands a 3x to 5x price premium over standard DRAM, and as HBM's share of total DRAM output rises from roughly 10% to an estimated 30% by 2027, average selling prices across the product mix will climb structurally, even if standard DRAM prices stay flat. For SK Hynix, which derives more than half of its DRAM revenue from HBM, the margin expansion is already visible in quarterly earnings. Samsung's recent entry into the trillion-dollar valuation club reflects the market pricing in this mix shift rather than rerating its legacy consumer electronics business. The accelerated P5 Fab 2 construction will require heavy upfront capex, but the payback period shrinks when output is pre-sold at premium prices to hyperscalers committed to multi-year supply agreements. Micron's PSMC plant acquisition was likely a cheaper and faster route to added capacity than building from scratch, which should improve its return on invested capital relative to Samsung's and SK Hynix's greenfield bets. The Roundhill Memory ETF's 30% weekly gain captures the market's realization that memory makers are no longer cyclical commodity plays; they are AI infrastructure plays with durable pricing power. The risk is that all three players add capacity simultaneously, creating oversupply by 2028, but current hyperscaler order books suggest demand will absorb the output through at least the end of 2027.
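The mix-shift argument is weighted-average arithmetic, and a short Python sketch makes it concrete. The assumptions are ours, not reported figures: prices are indexed per bit with standard DRAM at 1.0, standard DRAM pricing is held flat, and the 10% and 30% shares are read as shares of DRAM bit output. `blended_asp` and `asp_uplift` are hypothetical helpers for illustration.

```python
# Illustrative blended-ASP model for the DRAM-to-HBM mix shift.
# Assumptions (hypothetical, not reported figures):
#   - prices are per bit, indexed so standard DRAM = 1.0
#   - standard DRAM pricing is held flat; only the mix changes
#   - "HBM share" means HBM's share of total DRAM bit output


def blended_asp(hbm_share: float, hbm_premium: float, base_price: float = 1.0) -> float:
    """Weighted-average price per bit across standard DRAM and HBM."""
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    standard_part = (1.0 - hbm_share) * base_price
    hbm_part = hbm_share * hbm_premium * base_price
    return standard_part + hbm_part


def asp_uplift(old_share: float, new_share: float, hbm_premium: float) -> float:
    """Fractional increase in blended ASP caused by the mix shift alone."""
    return blended_asp(new_share, hbm_premium) / blended_asp(old_share, hbm_premium) - 1.0


if __name__ == "__main__":
    # Bracket the 3x-5x premium range, moving HBM share from 10% to 30%.
    for premium in (3.0, 5.0):
        before = blended_asp(0.10, premium)
        after = blended_asp(0.30, premium)
        uplift = asp_uplift(0.10, 0.30, premium)
        print(f"{premium:.0f}x premium: {before:.2f} -> {after:.2f} (+{uplift:.0%})")
```

Run as a script, it prints `3x premium: 1.20 -> 1.60 (+33%)` and `5x premium: 1.40 -> 2.20 (+57%)`. Under these assumptions, the mix shift alone would lift the blended price per bit by roughly a third to more than half before any change in standard DRAM pricing. Actual outcomes depend on how standard DRAM prices move and on whether the HBM premium holds as supply grows.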

The Competitive Reshuffle: SK Hynix vs. Samsung vs. Micron

The supercycle is reshuffling the competitive hierarchy in memory. SK Hynix entered this cycle with a first-mover advantage in HBM: it co-developed the original HBM standard with AMD and has since become Nvidia's lead HBM supplier. That lead is now attracting direct investment offers from big tech firms, a dynamic that effectively turns SK Hynix into a captive supplier for the largest AI clusters. Samsung, historically the memory volume leader, is playing catch-up in HBM but leveraging its scale and vertical integration, supplying both memory and logic chips to hyperscalers. The accelerated P5 Fab 2 is Samsung's signal that it will not cede the HBM market to SK Hynix. Micron, the smallest of the three, is pursuing a niche strategy: acquiring existing fab capacity (the PSMC plant) to add DRAM output quickly, targeting the second tier of AI customers and enterprise deployments. The competitive dynamic is unusual because all three are expanding simultaneously, rather than following the historical pattern of one player gaining share at another's expense. The market is growing fast enough to absorb all three, but the long-term winner will be the one that locks in the most hyperscaler supply agreements, and SK Hynix's investment offers from big tech firms are a leading indicator that it is winning that race.

The deeper implication is that memory competition is shifting from wafer cost and yield to relationship capital with AI customers. A chipmaker that secures a committed supply agreement with a hyperscaler building 300,000-plus GPU clusters effectively removes that output from the spot market and gains visibility into multi-year demand. This changes how investors should evaluate memory stocks: the metric that matters is not quarterly average selling price but the percentage of output under long-term contract, a figure that is rising faster at SK Hynix than at its peers.
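The contract-share metric is simple to compute, and a small sketch shows why it matters for earnings visibility. The Python below is a hypothetical model, not any company's disclosure: `SupplyBook`, `rank_by_visibility`, and the two suppliers' volumes are invented for illustration, and `revenue_swing` assumes contracted and spot output start at the same unit price, with contract prices fixed while spot prices move.

```python
from dataclasses import dataclass


@dataclass
class SupplyBook:
    """Hypothetical snapshot of one memory maker's planned output (any consistent unit)."""

    name: str
    long_term_contracted: float  # output committed under multi-year agreements
    spot_or_short_term: float    # output priced quarter to quarter or on the spot market

    @property
    def total(self) -> float:
        return self.long_term_contracted + self.spot_or_short_term

    @property
    def contracted_share(self) -> float:
        """Fraction of output under long-term contract, between 0.0 and 1.0."""
        if self.total <= 0:
            raise ValueError("total output must be positive")
        return self.long_term_contracted / self.total

    def revenue_swing(self, spot_price_change: float) -> float:
        """Fractional revenue change if spot prices move and contract prices stay fixed.

        Simplifying assumption: contracted and spot output start at the same unit price.
        """
        return (1.0 - self.contracted_share) * spot_price_change


def rank_by_visibility(books: list[SupplyBook]) -> list[SupplyBook]:
    """Order suppliers from most to least output under long-term contract."""
    return sorted(books, key=lambda b: b.contracted_share, reverse=True)


if __name__ == "__main__":
    # Hypothetical suppliers with made-up volumes, for illustration only.
    books = [
        SupplyBook("Supplier A", long_term_contracted=40, spot_or_short_term=60),
        SupplyBook("Supplier B", long_term_contracted=70, spot_or_short_term=30),
    ]
    for book in rank_by_visibility(books):
        print(f"{book.name}: {book.contracted_share:.0%} contracted, "
              f"revenue swing on a 50% spot drop: {book.revenue_swing(-0.5):+.0%}")
```

Ranked by contract share, the sketch lists Supplier B (70% contracted, -15% revenue swing) ahead of Supplier A (40% contracted, -30%): the supplier with more output under long-term contract takes half the revenue hit from the same spot-price collapse. Real supply agreements may include price-adjustment terms, so the actual buffer could be smaller than this fixed-price simplification implies.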

Downstream Effects on Hyperscalers, Fabs, and Enterprise Buyers

The memory supercycle creates second-order effects across the AI supply chain. Hyperscalers (Amazon, Google, Microsoft, Meta) are the ultimate buyers, and their willingness to invest directly in SK Hynix's production pipelines signals that they view memory as a bottleneck more binding than GPU supply. This is a departure from the historical model where hyperscalers bought commodity memory on spot markets. The shift to direct investment locks in pricing and allocation, but it also means hyperscalers bear some of the capex risk if demand softens. For fab equipment makers, the accelerated Samsung P5 Fab 2 and Micron's Taiwan plant ramp mean higher orders for lithography, deposition, and test equipment, benefiting suppliers including ASML and Applied Materials. The combined equipment spend from Samsung's P5 acceleration and Micron's Taiwan ramp is substantial enough to influence quarterly order backlogs at both companies, providing a second-order equity catalyst that extends the supercycle trade beyond pure memory names. For enterprise buyers, specifically companies building on-premise AI infrastructure, the supercycle means higher memory prices and longer lead times, potentially pushing them toward cloud-based inference rather than self-built clusters. The memory makers themselves face a capacity planning dilemma: if the supercycle extends beyond 2027 as expected, they will need to start planning the next generation of fabs now, committing billions before the current wave of capacity is fully utilized. The risk of overbuilding is real, but the cost of underbuilding (losing share in the AI memory market) is higher.

What the Supercycle Signals About the Market's Future

The memory supercycle is a strategic signal that the semiconductor industry has entered a new regime. Historically, memory was a cyclical commodity whose prices could swing 50% or more in a year. The current cycle is different because demand is driven by a single structural trend (AI infrastructure buildout) rather than the broad PC and smartphone replacement cycles that drove past booms. The fact that SK Hynix is fielding direct investment offers from big tech firms indicates that hyperscalers expect AI compute demand to grow for years, not quarters. Samsung's accelerated fab construction is a bet that the AI boom will last through the end of the decade. Micron's PSMC acquisition shows that even the third-largest player considers the opportunity worth deepening its already substantial manufacturing footprint in Taiwan, despite the geopolitical risk. If the supercycle extends beyond 2027, memory makers will have to keep investing through any intervening downturn to maintain capacity for the next AI wave. This is a structural shift in how the industry allocates capital: memory is no longer a cost center for the tech industry but a strategic asset.

The memory supercycle will likely force a revaluation of the entire semiconductor supply chain. If SK Hynix and Samsung continue to command premium pricing for HBM, the gross margin structure of the memory industry will reset permanently higher. The direct investment model, in which hyperscalers fund specific fab capacity, could become the norm for other AI-essential components, from advanced packaging to silicon photonics. The risk is that the current euphoria, reflected in the 30% weekly ETF gain, leads to overinvestment and a correction in 2028 or 2029. But the structural demand from AI, a technology still in its early deployment phase, argues that the supercycle has legs. The critical variable to watch through the rest of 2026 is whether NAND pricing follows DRAM into a sustained upswing. NAND has lagged this cycle because AI workloads depend far less on flash storage than on HBM, but as AI model sizes grow and inference volumes compound, fast storage for model weights and key-value (KV) caches will become a secondary bottleneck. If NAND pricing firms in the second half of 2026, it would confirm that the supercycle is broadening beyond HBM into the full memory stack. The memory makers that lock in hyperscaler partnerships now will be the ones that define the next decade of AI infrastructure.
