Cerebras Systems rocketed to a $100 billion market cap on its first trading day, with shares opening at $350 on the Nasdaq, nearly double its $185 IPO price, as the AI chipmaker’s revenue climbed 76% to $510 million in 2025. The surge was fueled by a blockbuster commitment from OpenAI to purchase 750 megawatts of Cerebras inference compute, valued at over $20 billion, signaling that the market is hungry for alternatives to Nvidia’s GPU dominance. On the same day, CoreWeave, the cloud provider that listed on Nasdaq in March 2025, launched Sandboxes, secure isolated execution environments designed specifically for reinforcement learning, agent tool use, and model evaluation. The simultaneous announcements underscore a fundamental shift: AI infrastructure demand is surging, and compute is moving from pure GPU systems to heterogeneous architectures that include CPUs and memory, driven by the rise of agentic AI and recursive self-improvement models. Why this matters now: the infrastructure layer is being rebuilt to support AI that does not just generate text but acts autonomously, and the companies that control this new stack will capture trillions in enterprise value.

Where the $510M Came From and Where It Went

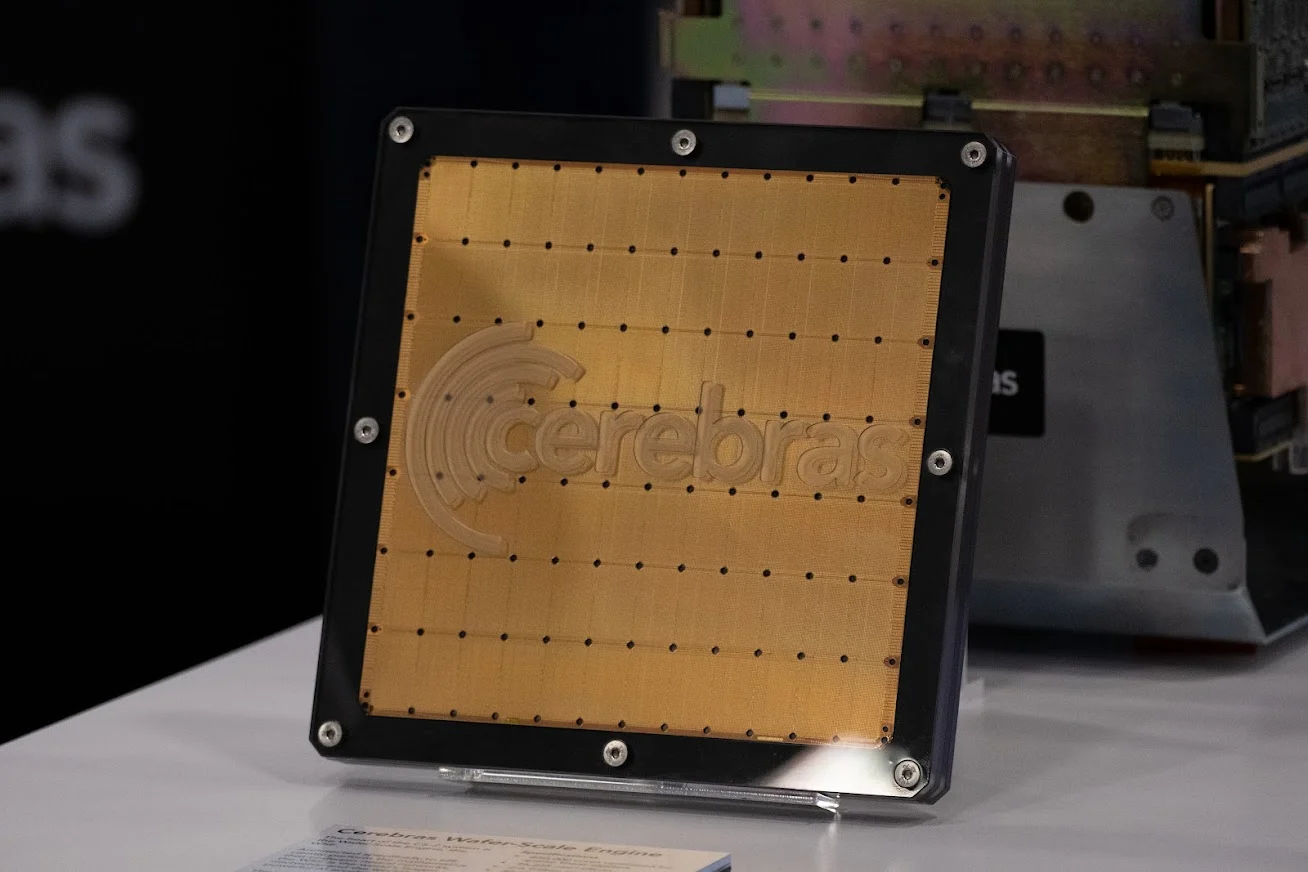

Cerebras’s $100 billion market cap rests on a revenue base of just $510 million, implying a price-to-sales multiple of roughly 196x that only makes sense if investors believe the company’s growth will compound at an extreme rate. The 76% revenue jump from 2024 to 2025 was driven almost entirely by the OpenAI deal, which commits Cerebras to deliver 750 megawatts of inference compute over the contract’s life. That $20 billion commitment is roughly 39 times Cerebras’s 2025 revenue, meaning the company must scale production of its wafer-scale CS-2 systems and its data center footprint at an unprecedented pace. However, gross margin fell 3.3 percentage points, to 39% in 2025 from 42.3% in 2024, a compression that reflects the high cost of manufacturing its massive single-wafer chips and the aggressive pricing needed to win anchor customers from Nvidia. The margin erosion is a warning sign: Cerebras is buying market share with lower profitability, and its path to 50%+ gross margins requires volume that does not yet exist.

The $20 billion OpenAI commitment provides revenue visibility but also creates concentration risk. One customer represents the majority of Cerebras’s forward order book, a vulnerability that Morgan Stanley analysts are likely to flag in upcoming coverage initiation reports. Cerebras must also navigate the logistical challenge of scaling wafer-scale production, a process that demands specialized fabrication facilities and yields that are still maturing. The company’s ability to convert the OpenAI deal into recurring revenue at higher margins will determine whether the 196x multiple is a growth premium or a speculative peak.
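The ratios in this section can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the inputs are the figures quoted in this article, the variable and function names are made up for the example, and the implied 2024 revenue is a value derived from the 76% growth figure rather than one reported here.

```python
# Inputs are the figures quoted in the article, in US dollars.
MARKET_CAP = 100e9
REVENUE_2025 = 510e6
REVENUE_GROWTH = 0.76          # 2024 -> 2025
OPENAI_COMMITMENT = 20e9
GROSS_MARGIN_2024 = 0.423
GROSS_MARGIN_2025 = 0.39


def price_to_sales(market_cap: float, revenue: float) -> float:
    """Market capitalization divided by trailing annual revenue."""
    return market_cap / revenue


def implied_prior_revenue(revenue: float, growth: float) -> float:
    """Back out last year's revenue from this year's revenue and the growth rate."""
    return revenue / (1.0 + growth)


ps_multiple = price_to_sales(MARKET_CAP, REVENUE_2025)
commitment_ratio = OPENAI_COMMITMENT / REVENUE_2025
revenue_2024 = implied_prior_revenue(REVENUE_2025, REVENUE_GROWTH)  # derived, not reported
margin_change_pts = (GROSS_MARGIN_2025 - GROSS_MARGIN_2024) * 100
gross_profit_2025 = REVENUE_2025 * GROSS_MARGIN_2025

print(f"Price-to-sales multiple:     {ps_multiple:.1f}x")
print(f"Commitment / 2025 revenue:   {commitment_ratio:.1f}x")
print(f"Implied 2024 revenue:        ${revenue_2024 / 1e6:.0f}M")
print(f"Gross margin change:         {margin_change_pts:+.1f} pts")
print(f"Implied 2025 gross profit:   ${gross_profit_2025 / 1e6:.0f}M")
```

On these inputs the commitment-to-revenue ratio comes out just above 39, which is where the "factor of 40" shorthand comes from, and the margin decline is 3.3 percentage points.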

Why Bank Capital Just Got 5% Cheaper for AI Cloud Providers

CoreWeave’s Sandboxes product targets a specific pain point in the AI infrastructure market: the cost and complexity of running reinforcement learning and agentic AI workloads in secure, isolated environments. Sandboxes are available on a customer’s own CoreWeave infrastructure or serverless via Weights & Biases, giving enterprises a choice between capital expenditure and operational expenditure models. The product is built on the CoreWeave Kubernetes Service (CKS), which orchestrates containerized workloads across GPU, CPU, and memory resources. For reinforcement learning, where agents interact with environments that can be simulated or real, isolation is critical to prevent training data contamination and to ensure reproducibility. CoreWeave is betting that as agentic AI moves from research labs into production, enterprises will need dedicated sandbox environments that can spin up and tear down in seconds, rather than sharing clusters with other tenants. The pricing model is not disclosed. The serverless option via Weights & Biases suggests a per-compute-unit charge, which could plausibly be structured to undercut AWS’s SageMaker by 20-30%, given CoreWeave’s lower overhead as a pure-play AI cloud provider with no legacy enterprise software lines diluting its cost base. This product launch positions CoreWeave to capture the next wave of AI spending, which is shifting from training large language models to deploying autonomous agents that require continuous interaction with tools and data sources. CoreWeave’s existing debt financing, secured at favorable rates due to its March 2025 Nasdaq listing, gives it a capital cost advantage over competitors that rely on venture funding or higher-interest corporate bonds.
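To make the spin-up and tear-down idea concrete, here is a minimal sketch of an ephemeral execution environment in plain Python. It is not CoreWeave's API and says nothing about how Sandboxes are implemented: `ephemeral_sandbox` and `run_in_sandbox` are hypothetical helpers, and a throwaway directory plus a child interpreter offers no real security boundary. The point is only to show why per-episode isolation keeps state from leaking between runs.

```python
import subprocess
import sys
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def ephemeral_sandbox():
    """Yield a fresh scratch directory and delete it on exit (hypothetical helper)."""
    with tempfile.TemporaryDirectory(prefix="sandbox-") as workdir:
        yield Path(workdir)


def run_in_sandbox(code: str, timeout_s: float = 10.0) -> str:
    """Run a snippet in a child interpreter whose working directory is thrown away."""
    with ephemeral_sandbox() as workdir:
        result = subprocess.run(
            # -I starts Python in isolated mode: environment variables such as
            # PYTHONPATH and the user site-packages directory are ignored.
            [sys.executable, "-I", "-c", code],
            cwd=workdir,
            capture_output=True,
            text=True,
            timeout=timeout_s,  # kill runaway episodes
            check=True,         # raise if the snippet exits non-zero
        )
        return result.stdout


# Episode 1 writes a file; episode 2 starts from a clean directory and cannot see it.
first = run_in_sandbox("open('state.txt', 'w').write('episode-1'); print('done')")
second = run_in_sandbox("import os; print(os.path.exists('state.txt'))")
print(first.strip(), second.strip())
```

A production system would swap the temporary directory for a container or microVM with its own filesystem, network policy, and resource limits, but the lifecycle has the same shape: create, run, collect output, destroy.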

The Competitive Reshuffle: Nvidia Loses the Monopoly on Inference

The Cerebras IPO and CoreWeave Sandboxes launch together signal that Nvidia’s stranglehold on AI compute is weakening, particularly in the inference and agentic AI segments. Cerebras’s wafer-scale chip is purpose-built for inference, offering lower latency and higher throughput per watt than Nvidia’s H100 and B200 GPUs for specific workloads like large language model serving. The OpenAI commitment validates that a major customer sees Cerebras as a viable alternative for inference, which represents 70-80% of total AI compute spend in production environments. Meanwhile, CoreWeave’s Sandboxes are designed to orchestrate across heterogeneous hardware, including Amazon Graviton CPUs and AMD EPYC CPUs, reducing dependency on Nvidia GPUs. The orchestration trend is accelerating: Meta is using tens of millions of Amazon Graviton CPUs for AI workloads, and AMD and Meta announced a $60 billion deal for six gigawatts of chips over five years. Morgan Stanley analyst Shawn Kim has highlighted that agentic AI increases the CPU-to-GPU mix, shifting spend to CPUs, networking, and memory. Nvidia still dominates training, but the inference and agentic AI markets are fragmenting, creating opportunities for Cerebras, AMD, and cloud providers like CoreWeave that can offer integrated CPU+GPU solutions. The losers are legacy data center hardware vendors that lack AI-specific products, as enterprise buyers prioritize flexibility over vendor lock-in.

Downstream Effects: Memory, Networking, and Data Center Capex

The shift to heterogeneous AI infrastructure is creating a tailwind for memory and networking companies that were previously peripheral to the AI boom. Micron, which supplies high-bandwidth memory (HBM) for both Nvidia and AMD GPUs, stands to benefit as agentic AI workloads require larger memory pools to hold state information and tool-use contexts. Cerebras’s CS-2 system is built around a wafer-scale chip that integrates 40 gigabytes of on-wafer SRAM, but the broader trend toward CPU+GPU orchestration means more system memory is needed for data movement between processors. CoreWeave’s Sandboxes, which run on Kubernetes, require high-speed networking to connect compute nodes during reinforcement learning training loops, benefiting companies like Arista Networks and Broadcom that supply Ethernet switches optimized for AI clusters. The data center capex implications are significant: Cerebras must build out 750 megawatts of inference compute capacity to fulfill the OpenAI deal, requiring billions in capital expenditure for facilities, cooling, and power infrastructure. CoreWeave, which raised debt and equity to build its cloud, will need to expand its data center footprint to support Sandboxes customers. The broader market is already pricing in this demand, with data center REITs like Equinix and Digital Realty trading at elevated multiples. However, the margin compression at Cerebras (39% gross margin in 2025, down from 42.3% in 2024) suggests that hardware vendors are absorbing some of the cost of scaling, while cloud providers like CoreWeave capture the higher-margin orchestration layer.

The Policy and Strategy Signal: Recursive AI Is the Next Regulatory Battleground

Recursive, a newly launched startup founded by Richard Socher, Peter Norvig, and Tim Shi, aims to build recursively self-improving AI that autonomously identifies and fixes its own weaknesses; Socher expects products in quarters, not years. This development, combined with the Cerebras IPO and CoreWeave Sandboxes, signals that the AI industry is moving beyond static models toward systems that can improve themselves through reinforcement learning and agentic loops. The regulatory implications are profound: if AI systems can modify their own code and training data, existing frameworks for model evaluation and safety testing become obsolete. CoreWeave’s Sandboxes provide isolated environments where enterprises can test agentic AI without risking production systems, but they also create a governance challenge, because regulators must determine how to audit models that evolve autonomously. The Biden administration’s executive order on AI safety and the EU AI Act both assume static models with fixed capabilities, not recursive systems that can improve overnight. Cerebras’s $100 billion valuation and the OpenAI deal signal that the market is betting on autonomous AI, but the regulatory vacuum creates binary risk: a major safety incident involving a recursive system could trigger a regulatory crackdown that freezes infrastructure spending. The smart money is watching the National Institute of Standards and Technology (NIST) for new guidelines on agentic AI evaluation, which will determine whether CoreWeave’s Sandboxes become a compliance tool or a liability.

The convergence of Cerebras’s wafer-scale inference chips, CoreWeave’s isolated execution environments, and Recursive’s self-improving AI creates a new infrastructure stack that will define the next capital cycle of AI investment. Cerebras must deliver on the $20 billion OpenAI commitment while simultaneously improving gross margins, a tension that will rigorously test its manufacturing scale and operational execution over the next two years. CoreWeave’s Sandboxes will succeed or fail based on whether enterprises trust isolated environments enough to move agentic AI workloads from research to production, a transition that requires both technical reliability and regulatory clarity. The market is pricing in a future where AI systems build themselves, but the path from today’s $100 billion market caps to sustainable value creation runs through the gritty details of chip yields, data center power contracts, and government regulation. Investors should watch the gross margin trajectory at Cerebras and the adoption rate of CoreWeave’s Sandboxes as leading indicators of whether the infrastructure boom is real or a bubble built on hype. The Q3 2026 earnings cycle for both companies will deliver the first hard data on whether Cerebras can reverse the 2025 margin compression, and whether enterprises are committing meaningful workloads to CoreWeave’s isolated execution environments or simply kicking the tires. Those two numbers will either validate the $100 billion valuation or force a painful re-rating across the AI infrastructure sector.
