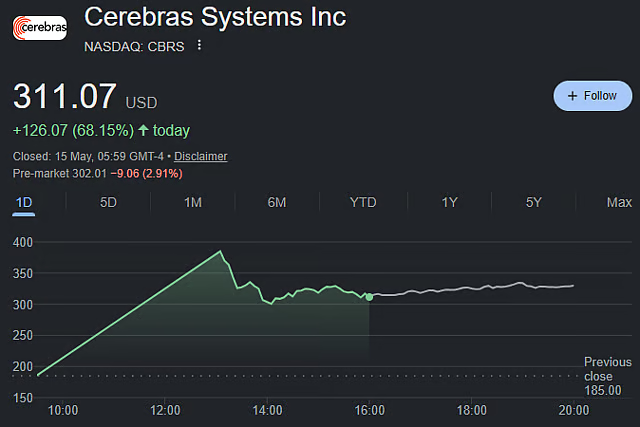

CoreWeave today launched CoreWeave Sandboxes, an execution layer for secure, isolated environments designed to accelerate reinforcement learning, agent tool use, and model evaluation. Separately, Cerebras Systems saw its stock nearly double on its Nasdaq debut, opening at $350 per share against a $185 IPO price and pushing its market capitalization above $100 billion. The two events look unrelated, but they support a single thesis: the AI infrastructure market is undergoing a structural shift away from pure GPU compute toward orchestration, memory, and specialized silicon. CoreWeave Sandboxes is available in two forms: on-cluster, running on a customer's own CoreWeave infrastructure via CoreWeave Kubernetes Service, or serverless through Weights & Biases (W&B). Cerebras, meanwhile, has locked in partnerships with OpenAI and Amazon Web Services; OpenAI has committed to purchase 750 megawatts of inference compute valued at over $20 billion, with an option for an additional 1.25 gigawatts. These developments matter now because they signal that the next phase of AI infrastructure competition will be defined not by who builds the biggest GPU cluster, but by who can orchestrate heterogeneous compute, secure agentic workloads, and deliver inference at scale.
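To put "nearly double" in numbers, the short Python sketch below works out the first-day pop from the two Cerebras share prices quoted above. The function name `first_day_pop` is purely illustrative, and the share-count figure is only a rough lower bound that assumes the $100 billion market capitalization is measured at the $350 opening price, which the article does not state.

```python
# Prices and market cap as quoted in the article (USD).
IPO_PRICE = 185.0
OPEN_PRICE = 350.0
MARKET_CAP_FLOOR = 100e9  # "above $100 billion", so treated as a floor


def first_day_pop(ipo_price: float, open_price: float) -> float:
    """Return the opening gain over the IPO price as a fraction."""
    if ipo_price <= 0:
        raise ValueError("ipo_price must be positive")
    return open_price / ipo_price - 1.0


pop = first_day_pop(IPO_PRICE, OPEN_PRICE)

# Assumption: the market cap is measured at the opening price.
implied_min_shares = MARKET_CAP_FLOOR / OPEN_PRICE

print(f"First-day pop: {pop:.1%}")
print(f"Implied share count: at least {implied_min_shares / 1e6:.0f}M")
```

On these inputs the pop comes out at roughly 89%, which is consistent with the "nearly double" description.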

CoreWeave Sandboxes as an Execution Layer

CoreWeave Sandboxes is not a GPU product. It is an execution layer that creates secure, isolated environments for reinforcement learning, agent tool use, and model evaluation, which are precisely the workloads growing fastest as AI moves from training to deployment. The product supports two access models: on-cluster deployment on a customer's own CoreWeave infrastructure through CoreWeave Kubernetes Service, and serverless access through Weights & Biases. This dual-access model is critical: it lets enterprises keep sensitive agentic workloads in their own tenant for compliance and security while prototyping rapidly through a managed service. The timing is deliberate. As agentic AI proliferates, the need for sandboxed execution environments that contain model-executed code and keep it away from production systems has become acute. CoreWeave is positioning Sandboxes as the security and orchestration layer for this new class of workloads, directly competing with offerings from AWS, Google Cloud, and Azure that have been slower to isolate agentic execution from training infrastructure. The product also addresses a growing pain point for reinforcement learning pipelines, where training and evaluation environments must be tightly controlled to prevent data leakage or unintended model behaviors. By offering Sandboxes as a standalone product rather than a feature bundled into a larger platform, CoreWeave is betting that enterprises will pay a premium for secure, isolated compute environments rather than build them in-house.

How the Money Flows: Cerebras Revenue and Margin Mechanics

Cerebras reported revenue of $510 million in 2025, up 76% year-over-year, but gross margin dipped to 39% from 42.3% in 2024. The margin compression is a direct consequence of the company's pivot from selling hardware to selling inference-as-a-service. The OpenAI deal, valued at over $20 billion for 750 megawatts of inference compute with an option for an additional 1.25 gigawatts, shifts Cerebras from a hardware vendor toward a recurring-revenue infrastructure provider. This transition is expensive: building out the wafer-scale clusters required to deliver that compute consumes cash and depresses near-term margins. The revenue visibility, however, is extraordinary. A committed deal worth more than $20 billion from a single customer provides a multi-year revenue backlog that helps justify the $100 billion market capitalization. The math works if Cerebras can maintain or improve utilization rates on its CS-2 systems while expanding gross margins through software optimization and scale. The company's 39% gross margin compares unfavorably with Nvidia's 70%+ margins, but Cerebras is not selling chips; it is selling compute time. Its margin structure more closely resembles that of a cloud provider than a semiconductor company, and the path to 50%+ gross margins runs through utilization and software efficiency, not chip pricing power.
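The revenue and margin figures above imply a few numbers the article does not spell out. The Python sketch below derives them as a back-of-the-envelope check. The helper names are illustrative, and the derived values (2024 revenue, gross profit in both years) are arithmetic consequences of the quoted figures, not numbers reported by Cerebras.

```python
# Figures as quoted in the article.
REVENUE_2025_M = 510.0     # USD millions
YOY_GROWTH = 0.76          # 76% year-over-year
GROSS_MARGIN_2025 = 0.39
GROSS_MARGIN_2024 = 0.423


def implied_prior_revenue(current: float, growth: float) -> float:
    """Back out last year's revenue from this year's revenue and growth rate."""
    return current / (1.0 + growth)


def gross_profit(revenue: float, margin: float) -> float:
    """Gross profit is revenue multiplied by gross margin."""
    return revenue * margin


revenue_2024_m = implied_prior_revenue(REVENUE_2025_M, YOY_GROWTH)
gp_2025_m = gross_profit(REVENUE_2025_M, GROSS_MARGIN_2025)
gp_2024_m = gross_profit(revenue_2024_m, GROSS_MARGIN_2024)

# Margin change in percentage points, and gross-profit growth as a fraction.
margin_change_pp = (GROSS_MARGIN_2025 - GROSS_MARGIN_2024) * 100
gp_growth = gp_2025_m / gp_2024_m - 1.0

print(f"Implied 2024 revenue: ${revenue_2024_m:.1f}M")
print(f"Gross profit 2024 -> 2025: ${gp_2024_m:.1f}M -> ${gp_2025_m:.1f}M")
print(f"Margin change: {margin_change_pp:+.1f} pp; gross profit growth: {gp_growth:.1%}")
```

The point of the exercise: even with margin down about 3.3 percentage points, implied gross profit still grows by roughly 62%, because revenue growth outpaces the margin compression.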

Competitive Reshuffle: Nvidia, AMD, and the Orchestration War

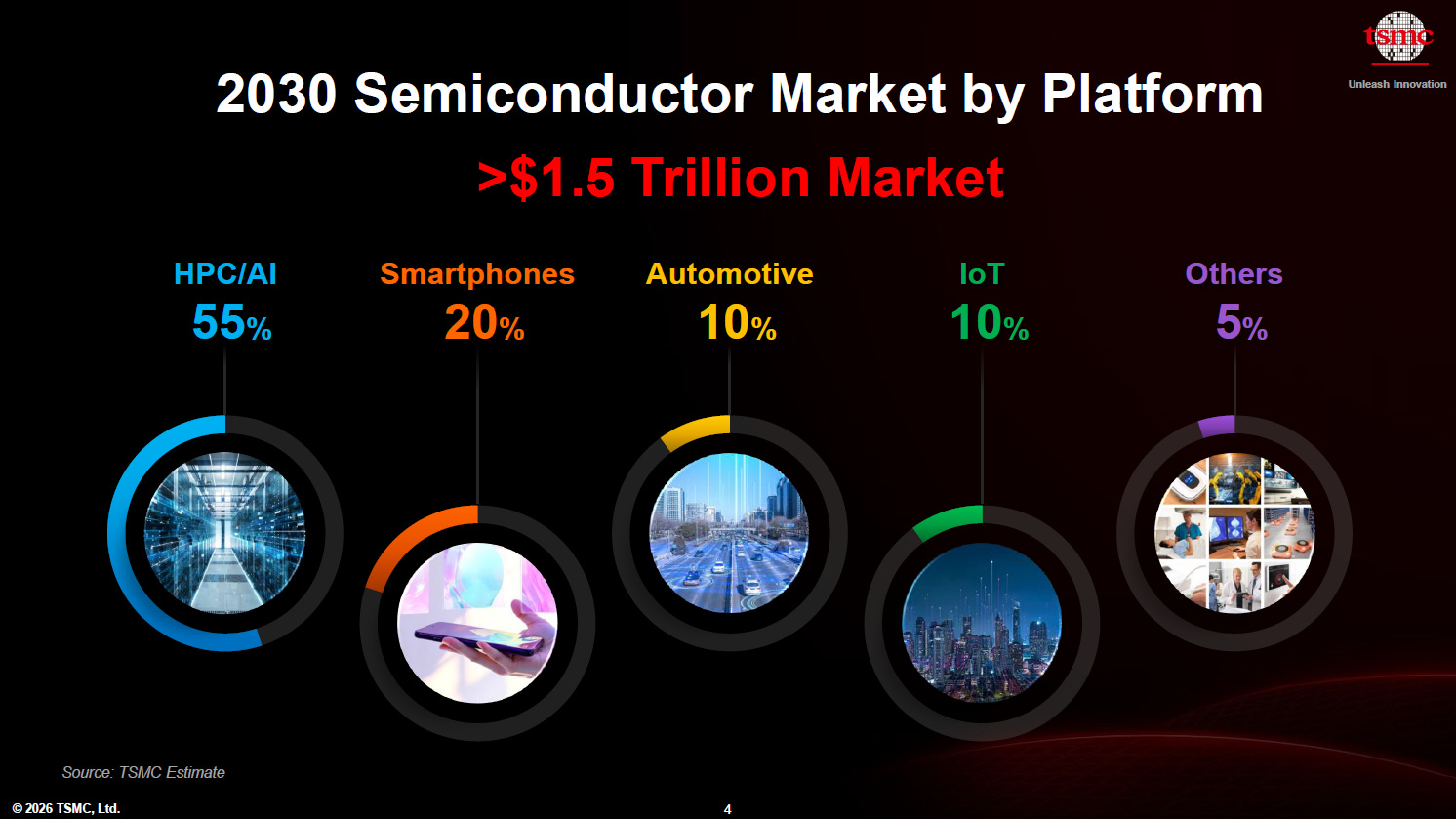

The shift toward agentic AI and inference workloads is reshaping the competitive landscape in ways that benefit Cerebras and CoreWeave while pressuring Nvidia's dominance. Morgan Stanley analyst Shawn Kim notes that orchestration increases system complexity, and agentic AI drives a higher CPU-to-GPU mix, shifting spend toward CPUs, networking, and memory. Meta is already using tens of millions of Graviton CPUs from AWS, and AMD has a $60 billion deal with Meta for 6 gigawatts of chips over five years. This is not a GPU replacement story; it is a compute diversification story. Cerebras wins because its wafer-scale architecture is optimized for inference, not training, and the OpenAI deal validates that thesis at a scale that no other alternative chipmaker has achieved. CoreWeave wins because Sandboxes addresses a security and orchestration gap that Nvidia's CUDA ecosystem does not fill. Nvidia remains the dominant training platform, but as inference workloads grow from 20% of AI compute to an expected 60% by 2028, the center of gravity shifts toward companies that can deliver low-latency, secure, and cost-effective inference at scale. AMD's $60 billion Meta deal is a direct challenge to Nvidia's data center GPU business, but it also signals that hyperscalers are willing to commit massive capital to non-Nvidia silicon if the price-performance equation works.

Downstream Effects: Hyperscalers, Fabs, and Enterprise Buyers

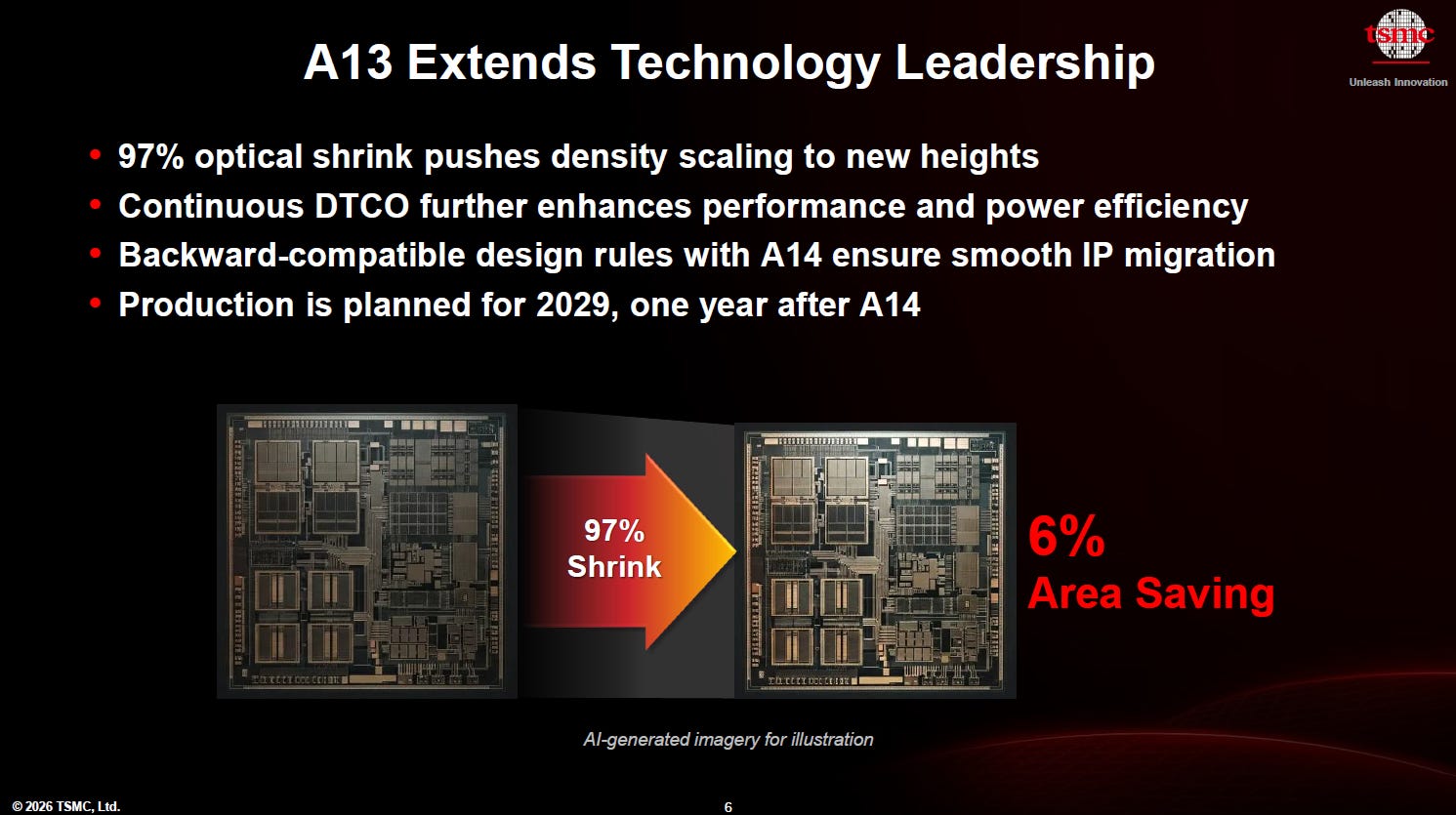

The downstream implications of these developments are significant for hyperscalers, semiconductor fabs, and enterprise buyers. AWS is a direct beneficiary of the shift: it hosts Cerebras inference clusters and supplies Graviton CPUs to Meta, positioning itself as the neutral infrastructure layer for heterogeneous AI compute. OpenAI's $20 billion commitment to Cerebras creates a new demand vector for wafer-scale silicon, which in turn drives fab capacity allocation at TSMC. Cerebras CS-2 systems require advanced packaging and wafer-level integration, consuming fab capacity that might otherwise go to Nvidia or AMD GPUs. For enterprise buyers, the CoreWeave Sandboxes launch signals that secure agentic AI execution is becoming a productized service rather than a DIY engineering project. Enterprises evaluating AI infrastructure now face a three-way split: Nvidia for training, Cerebras for inference, and CoreWeave Sandboxes for agentic workloads. This fragmentation creates integration complexity but also pricing leverage. The $30 billion funding deal for Anthropic at a $900 billion valuation, reported by the FT, underscores the scale of capital flowing into AI infrastructure. The AMD deal with Meta for 6 gigawatts of chips at $60 billion over five years reinforces that hyperscalers are making infrastructure bets that span multiple silicon generations; once a hyperscaler has built multi-gigawatt capacity around a specific architecture, migrating to an alternative requires years of re-tooling and re-certification. Big Tech groups are launching a global borrowing spree to fund AI expansion, and the winners will be the infrastructure providers that can deliver the lowest total cost of ownership for inference, not just raw training throughput.
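One way to compare the two headline deals is dollars per megawatt of capacity. The Python sketch below does that using only figures quoted in this article: over $20 billion for 750 megawatts (OpenAI and Cerebras) and $60 billion for 6 gigawatts (AMD and Meta). The `ComputeDeal` class is a hypothetical helper. Treat the result as a rough illustration, not a like-for-like comparison: the first deal covers inference compute as a service, the second covers chips, "over $20 billion" is a floor, and the 1.25 gigawatt option is excluded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeDeal:
    """Hypothetical container for a deal's headline value and capacity."""
    name: str
    value_usd: float
    capacity_mw: float

    def usd_per_mw(self) -> float:
        """Headline deal value divided by contracted capacity in megawatts."""
        return self.value_usd / self.capacity_mw


# Figures as quoted in the article; 6 gigawatts = 6000 megawatts.
openai_cerebras = ComputeDeal("OpenAI-Cerebras (inference compute)", 20e9, 750.0)
amd_meta = ComputeDeal("AMD-Meta (chips, five years)", 60e9, 6000.0)

for deal in (openai_cerebras, amd_meta):
    print(f"{deal.name}: ${deal.usd_per_mw() / 1e6:.1f}M per MW")

# How much more the first deal costs per megawatt on headline numbers alone.
ratio = openai_cerebras.usd_per_mw() / amd_meta.usd_per_mw()
print(f"Ratio: {ratio:.2f}x")
```

On headline numbers, the Cerebras commitment works out to roughly $26.7 million per megawatt against $10 million per megawatt for the AMD deal, which is what one would expect when one price bundles operated compute and the other covers silicon alone.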

Policy and Strategy Signal: What the Market Is Telling Us

The simultaneous arrival of CoreWeave Sandboxes and the Cerebras IPO sends a clear signal about where the AI infrastructure market is heading: away from a single-vendor GPU monoculture and toward a multi-architecture, multi-provider ecosystem where orchestration and security are as important as raw compute. Cerebras' $100 billion market capitalization, reached after shares priced at $185 nearly doubled on day one, represents a bet that alternative chip architectures can capture a meaningful share of the inference market. CoreWeave's Sandboxes launch represents a bet that the next competitive battleground is not hardware but software: specifically, the execution layer that governs how agents interact with tools, data, and production systems. The $30 billion funding round for Anthropic at a $900 billion valuation indicates that the market believes AI infrastructure will continue to grow at a compound rate that justifies current valuations. The question is not whether the market will grow, but which companies will capture the margin. Cerebras and CoreWeave are both betting that the answer is not Nvidia.

Morgan Stanley analyst Shawn Kim has framed this shift as the orchestration era, a period in which managing heterogeneous compute environments, not raw processing power, becomes the primary competitive differentiator. Enterprise procurement teams that built frameworks around Nvidia H100 allocations are now evaluating wafer-scale inference systems, CPU-heavy agentic configurations, and sandboxed execution layers as separate budget categories with distinct procurement cycles. The policy signal is sharpening as well. Regulators and enterprise risk officers are demanding secure, auditable AI execution environments, and CoreWeave is positioning Sandboxes as the compliance-friendly answer. Regulators in the EU and the US are also beginning to scrutinize AI infrastructure concentration, and the emergence of alternative chipmakers like Cerebras is viewed favorably by policymakers concerned about single-vendor systemic risk in critical AI workloads.

The convergence of these trends points to a market structure that looks more like the early days of cloud computing than the GPU gold rush of 2023–2025. Just as AWS, Azure, and Google Cloud competed on orchestration, security, and ecosystem lock-in rather than raw server performance, the next phase of AI infrastructure competition will be defined by who can manage heterogeneous compute, secure agentic workloads, and deliver inference at the lowest total cost. Cerebras has the inference architecture and the OpenAI anchor deal; CoreWeave has the execution layer and the Weights & Biases integration; Nvidia has the training dominance and the CUDA moat. The winners will be determined not by who builds the fastest chip, but by who builds the most efficient system for the workloads that matter. For enterprise buyers and investors, the takeaway is clear: the AI infrastructure market is diversifying, and the next billion-dollar opportunities will come from orchestration, security, and inference at scale, not from another GPU.
