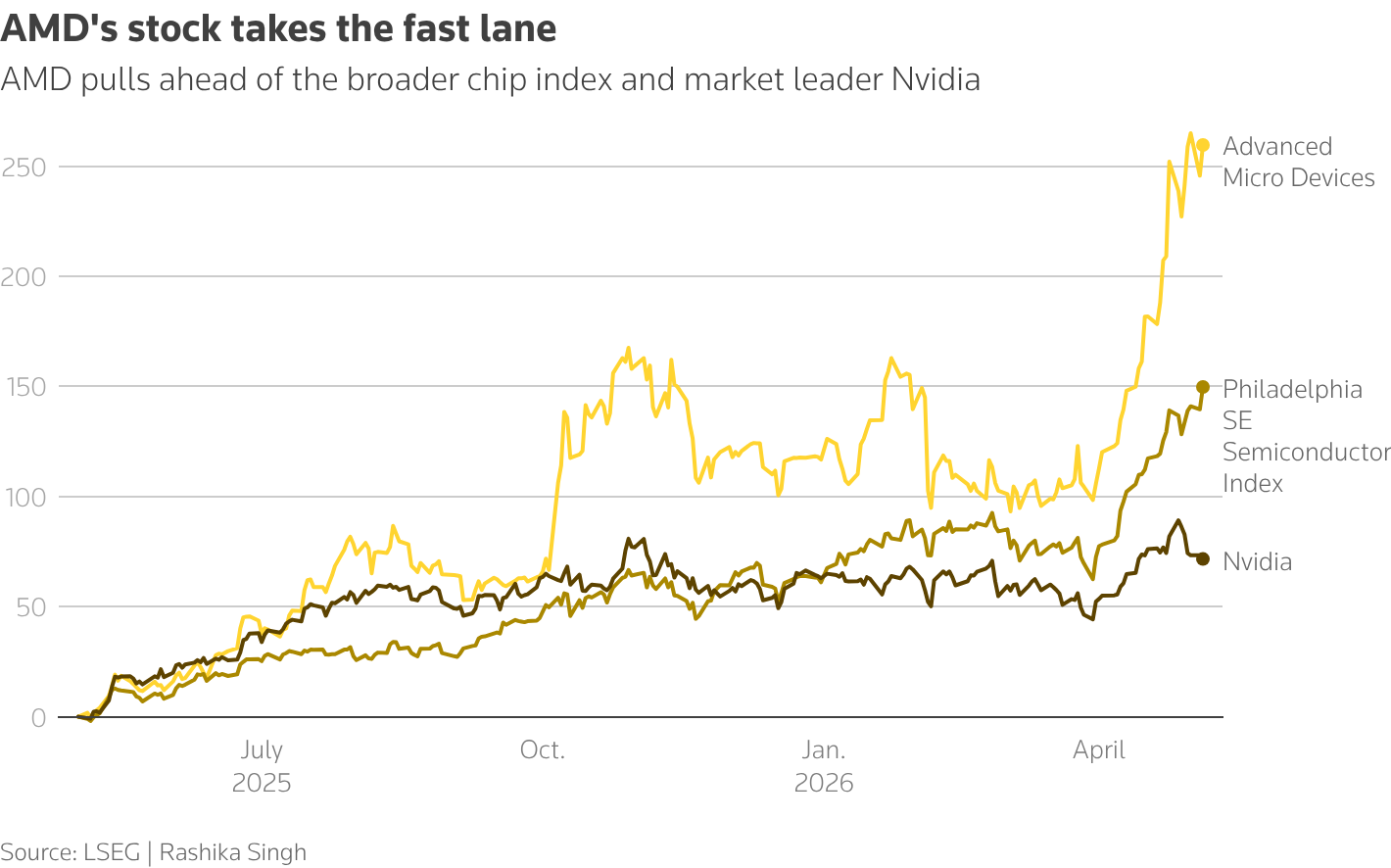

Nvidia's forward enterprise value-to-EBITDA ratio of 18.23 looks low against the 60-70% growth the company is projected to deliver, a valuation mismatch that has Goldman Sachs maintaining a buy rating with a 12-month price target of $250 based on a 30x price-to-earnings multiple. The chipmaker spent $6.1 billion on capital expenditures in fiscal 2026 and plans to increase that figure in fiscal 2027, even as competition from Amazon's Trainium chips and Alphabet's TPUs intensifies. Meanwhile, AMD shares hit a record high, sparking a global chip rally that lifted ASML 5.7% and ASMI 3.5%, while Super Micro surged 14.3% after forecasting fourth-quarter revenue and profit above expectations. The S&P 500 semiconductor index is expected to post 109.8% earnings growth for the first quarter. This divergence between Nvidia's moderate multiple and its explosive growth trajectory, set against a backdrop of rising in-house chip development by hyperscalers, defines the central tension in AI infrastructure investing today.

The valuation mismatch between Nvidia's multiple and its growth trajectory

Nvidia trades at 18.23 times forward EV/EBITDA, a multiple that would typically correspond to a company growing at 15-20%, not the 60-70% expansion analysts project. This compression reflects market skepticism about the durability of Nvidia's dominance in AI chips as hyperscalers develop their own silicon. Goldman Sachs applies a 30x P/E multiple to arrive at a $250 price target, implying the bank sees the current discount as temporary. The company's $6.1 billion in fiscal 2026 capex, with an increase planned for fiscal 2027, signals management's confidence that demand will absorb additional supply. Yet the forward multiple suggests investors are pricing in a slowdown that has not materialized. If Nvidia delivers on its growth projections and its enterprise value stays where it is, the stock would trade at roughly 11x the following year's EBITDA (18.23 divided by about 1.65). That valuation would make it one of the cheapest high-growth tech names in the market. The gap between the multiple and the growth rate creates a binary outcome: either Nvidia stumbles and the multiple was correct, or it sustains growth and the stock has significant upside. Goldman Sachs is betting on the latter, but the market is demanding proof that Nvidia can defend its turf against Amazon's and Alphabet's custom silicon.
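The arithmetic behind the 11x figure can be checked with a short sketch. It assumes enterprise value stays fixed while EBITDA grows by the projected 60-70%, which is a simplification, and the function names are illustrative rather than taken from any source. It also backs out the earnings per share implied by a $250 target at a 30x multiple, a number the bank's target implies but that is not quoted above.

```python
def multiple_after_growth(current_multiple: float, growth_rate: float) -> float:
    """EV/EBITDA multiple after EBITDA grows by growth_rate.

    Assumption: enterprise value is held constant, so only the
    denominator (EBITDA) changes.
    """
    return current_multiple / (1.0 + growth_rate)


def implied_eps(price_target: float, pe_multiple: float) -> float:
    """Earnings per share implied by a price target and a P/E multiple."""
    return price_target / pe_multiple


FORWARD_EV_EBITDA = 18.23  # figure quoted in the article

# Low end, midpoint, and high end of the projected growth range.
for growth in (0.60, 0.65, 0.70):
    compressed = multiple_after_growth(FORWARD_EV_EBITDA, growth)
    print(f"growth {growth:.0%}: {compressed:.1f}x")

print(f"implied EPS at $250 and 30x: ${implied_eps(250.0, 30.0):.2f}")
```

Across the 60-70% range the result falls between roughly 10.7x and 11.4x, which is where the "roughly 11x" figure comes from, and the price target implies earnings of about $8.33 per share.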

How the $6.1 billion capex bet flows through Nvidia's P&L

Nvidia's $6.1 billion in fiscal 2026 capital expenditures, with an increase planned for fiscal 2027, represents a direct bet that AI infrastructure demand will outpace any competitive erosion from Amazon's Trainium chips or Alphabet's TPUs. This capex funds additional fab capacity, packaging capacity for its B300 chips, and data center buildout. The spending flows through the P&L in two ways: depreciation drags on net income in the near term, while the additional supply enables revenue growth that will more than offset the depreciation charge. At 60-70% revenue growth, Nvidia's incremental gross margin on each additional dollar of revenue far exceeds the depreciation cost of the capex that enables it. The company's operating leverage means that even if competitive pressures force modest price reductions, the volume growth from expanded capacity sustains margin expansion. The capex increase also signals to investors that Nvidia sees a multi-year demand cycle, not a one-time spike. If the company were worried about Amazon or Alphabet displacing its chips in their own data centers, it would not be pouring billions into capacity that could become stranded. The capex decision is the strongest signal that Nvidia's management believes the total addressable market for AI chips is expanding faster than any single competitor can capture.
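The depreciation-versus-margin argument can be made concrete with a rough break-even sketch. The five-year straight-line useful life is an assumption chosen purely for illustration, since no depreciation schedule is given here, and the 80% gross margin borrows the margin level cited later in this piece. The function names are hypothetical.

```python
def annual_depreciation(capex: float, useful_life_years: int) -> float:
    """Straight-line depreciation charge per year (assumed method)."""
    return capex / useful_life_years


def breakeven_incremental_revenue(depreciation: float, gross_margin: float) -> float:
    """Extra revenue whose gross profit just covers the depreciation charge."""
    return depreciation / gross_margin


CAPEX_USD_BN = 6.1          # fiscal 2026 capex quoted in the article
ASSUMED_LIFE_YEARS = 5      # assumption, not from the article
ASSUMED_GROSS_MARGIN = 0.80 # assumption based on the "80%+" margin cited later

depreciation = annual_depreciation(CAPEX_USD_BN, ASSUMED_LIFE_YEARS)
breakeven = breakeven_incremental_revenue(depreciation, ASSUMED_GROSS_MARGIN)

print(f"annual depreciation: ${depreciation:.2f}bn")
print(f"break-even incremental revenue: ${breakeven:.3f}bn")
```

Under these assumptions the capex adds about $1.22 billion a year in depreciation, and roughly $1.5 billion of additional annual revenue would cover it at the gross profit level. A shorter useful life or a lower margin raises that threshold proportionally.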

AMD, Super Micro, and the broadening of the AI server market

AMD's record-high share price and Super Micro's 14.3% surge after forecasting above-consensus fourth-quarter revenue and profit mark a structural shift in AI infrastructure procurement. The S&P 500 semiconductor index's expected 109.8% first-quarter earnings growth confirms that demand is spreading beyond Nvidia's dominant GPU platform. Super Micro CEO Charles Liang has positioned the company to capture enterprise customers who want customized AI server configurations rather than Nvidia's reference architectures. Dell and Hewlett Packard Enterprise are also competing for this business, creating a multi-vendor ecosystem that benefits AMD's MI-series chips. The broadening market reduces Nvidia's pricing power but expands the total revenue pool for chipmakers and server manufacturers. Super Micro's ability to forecast above-expectation results suggests that enterprise AI adoption is accelerating, not just hyperscaler spending. This dynamic benefits ASML and ASMI, whose lithography and deposition equipment are essential for all advanced chip production, regardless of architecture. ASML's 5.7% gain and ASMI's 3.5% rise on the same day as AMD's record reflect the market's recognition that the AI chip boom is becoming a rising tide that lifts all semiconductor capital equipment makers, not just Nvidia.

SK Hynix's more than 100% share price gain in 2026 illustrates the same dynamic from the memory side. High-bandwidth memory stacks are as supply-constrained as advanced GPU nodes, and both Nvidia's GPUs and AMD's MI-series chips require HBM at scale. With only a handful of suppliers, led by SK Hynix and Samsung, producing at the volumes AI demand requires, memory pricing and availability shape AI infrastructure deployment timelines as directly as GPU allocation does. Super Micro's above-consensus fourth-quarter guidance suggests that enterprise buyers are committing multi-year AI server budgets, not running pilot programs, which means sustained HBM procurement regardless of which GPU architecture they select.

The hyperscaler in-house chip threat and the supply chain response

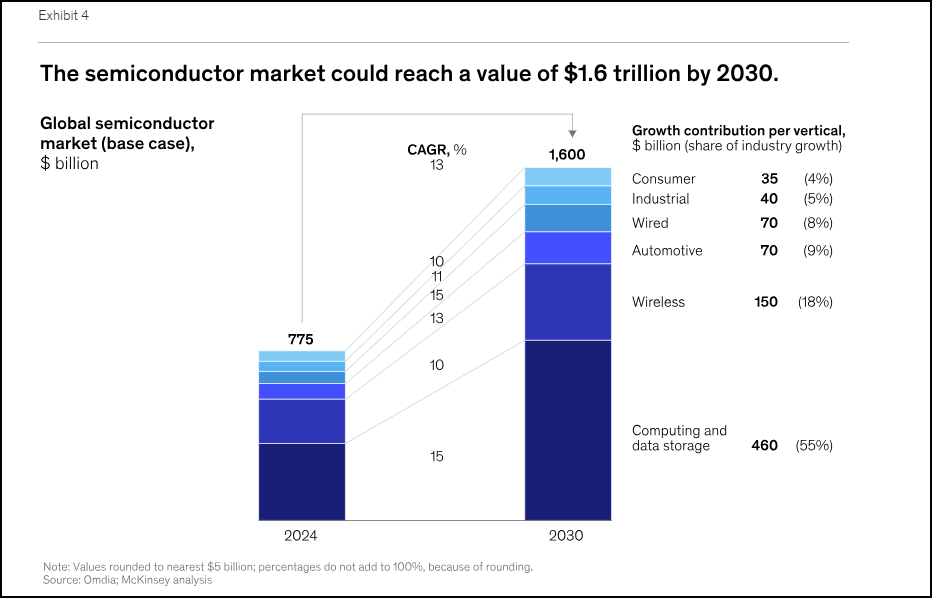

Amazon's Trainium chips and Alphabet's TPUs represent the most direct competitive threat to Nvidia's data center dominance, but their impact on the supply chain is more nuanced than a simple market share shift. Both Amazon and Alphabet design their own chips but rely on the same foundry capacity at Taiwan Semiconductor Manufacturing Co. that Nvidia uses. This creates a capacity allocation battle where Nvidia's willingness to pay premium prices for wafer starts gives it an advantage over its own customers. The hyperscalers' in-house chips also require advanced packaging, high-bandwidth memory from Samsung Electronics and SK Hynix, and server integration from companies like Super Micro and Dell. Samsung Electronics reaching a $1 trillion market value and SK Hynix more than doubling this year suggest that the AI memory boom does not depend on which company wins the GPU architecture battle. The supply chain benefits from multiple chip designs competing for capacity, because that competition drives higher total wafer starts and memory bit shipments than a single dominant architecture would. China's inability to access EUV lithography from ASML limits its ability to produce competitive AI chips, leaving DeepSeek and other Chinese AI companies without a domestic source of leading-edge semiconductors. This constraint reinforces the US advantage in data, computing access, chips, and talent, so the competitive dynamics among Nvidia, AMD, Amazon, and Alphabet play out primarily in Western markets.

Taiwan Semiconductor's dominance in advanced node manufacturing means that both Nvidia's B300 chips and Amazon's Trainium accelerators compete for allocation at the same 3nm and 4nm fabs. This dependency constrains all parties' ability to scale rapidly and explains why Nvidia's multi-year wafer purchase agreements create a structural procurement advantage that Amazon's in-house teams cannot quickly replicate. The competition between Nvidia and hyperscaler custom silicon is partly a race to secure TSMC capacity, not only a race to design the best architecture.

What the valuation gap signals about AI infrastructure's next phase

Nvidia's 18.23x forward EV/EBITDA multiple, set against 60-70% growth, signals that the market is pricing in a transition from the buildout phase of AI infrastructure to the optimization phase. During the buildout phase, hyperscalers bought whatever Nvidia could produce, regardless of cost. The optimization phase introduces price sensitivity, in-house chip alternatives, and a focus on total cost of ownership. Amazon and Alphabet's willingness to design their own chips reflects a calculation that the long-term savings from vertical integration outweigh the short-term benefits of using Nvidia's best-in-class GPUs. This shift does not mean Nvidia's revenue declines. It means the growth rate decelerates from the triple-digit levels of 2024-2025 to the 60-70% range projected now. The $6.1 billion in fiscal 2026 capex, with an increase planned for fiscal 2027, suggests Nvidia is preparing for this transition by ensuring it has the capacity to serve both hyperscaler and enterprise customers across multiple price points. The broader semiconductor index's expected 109.8% earnings growth suggests that AI infrastructure spending is becoming a permanent line item in corporate budgets, not a speculative bubble. Siemens' partnership with Xometry to bring AI-native supply chain capabilities to its Xcelerator platform, which serves approximately 70,000 employees in digital industries, illustrates how AI is embedding into industrial procurement and manufacturing workflows. Those enterprise deployments feed incremental but cumulative demand for AI compute, supplementing hyperscaler spending and broadening the total addressable market beyond what a single-customer analysis of Amazon or Alphabet would suggest. AMD's record valuation prices in that broader enterprise opportunity alongside the hyperscaler workloads its MI-series chips are targeting.

The valuation gap between Nvidia's multiple and its growth rate will resolve when the market sees whether the company can maintain its 80%+ gross margins while competing against Amazon's and Alphabet's custom silicon. If Nvidia sustains margins, the current multiple will look cheap in hindsight. If margins compress, the multiple was correct and the stock will trade sideways until earnings catch up. The $250 price target from Goldman Sachs implies the bank believes the former outcome is more likely. The next catalyst will be Nvidia's B300 product cycle, which must demonstrate that the company can deliver architectural advantages that hyperscalers cannot replicate with their in-house designs. China's inability to access EUV lithography keeps the competitive landscape a US-centric story, with ASML and ASMI benefiting regardless of which American chipmaker wins the architecture battle. The AI infrastructure buildout has moved from the experimental phase to the industrialization phase, and the companies that control the manufacturing capacity, memory supply, and server integration will capture value even as the chip architecture competition intensifies.
