Samsung Electronics crossed the $1 trillion market capitalization threshold on Wednesday, propelled by surging demand for high-bandwidth memory (HBM) chips that power the artificial intelligence data centers operated by hyperscalers like Amazon and Alphabet. The milestone cements Samsung's position as the dominant player in a memory chip market that has become the critical bottleneck in the AI supply chain. The company, alongside rivals SK Hynix and Micron, is racing to convert fabrication lines from consumer chips to HBM production as data center operators struggle to secure enough memory modules to keep their GPU clusters running. The shift marks a fundamental reordering of the semiconductor industry: memory makers, long considered commodity suppliers, now command pricing power and strategic importance once reserved for logic chip designers like Nvidia and AMD. This matters because the HBM shortage is now the single biggest constraint on AI infrastructure buildout, and the companies that control memory supply will dictate the pace and cost of the entire AI revolution. The speed of that reordering has caught many analysts off guard: memory makers historically traded at steep discounts to logic chip designers, but the HBM supply crunch has upended that valuation hierarchy in a matter of months.

HBM market structure drove the $1 trillion valuation

Samsung's ascent to a $1 trillion valuation is not a speculative bubble but a direct reflection of the HBM market's structural transformation. The company, along with SK Hynix and Micron, controls virtually all production of high-bandwidth memory, the specialized DRAM stacked vertically to deliver the extreme bandwidth required by Nvidia's H100 and B200 GPUs. First-quarter earnings reports from hyperscalers revealed a stark reality: the memory chip bottleneck, not GPU availability as in 2024, is now the primary constraint on AI data center expansion. Samsung has responded by reallocating a significant portion of its DRAM wafer capacity from consumer applications like smartphones and PCs to HBM production, a shift that carries a substantial opportunity cost. The company's memory division, which generated approximately $57 billion in revenue last year, is now selling HBM3E modules at average prices four to five times those of conventional DDR5 DRAM. This pricing power directly feeds the bottom line: Goldman Sachs estimates that every percentage point of market share Samsung captures in HBM adds roughly $1.2 billion to annual operating profit. The $1 trillion market cap, which implies a forward price-to-earnings multiple of roughly 18 times, still looks cheap relative to the growth trajectory. Samsung's memory division now accounts for a larger share of total company revenue than at any point in the past decade, underscoring how completely HBM has reshaped its business mix.
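The multiple and the share sensitivity quoted above lend themselves to quick back-of-envelope arithmetic. The Python sketch below uses only figures cited in this section (the $1 trillion market cap, the roughly 18x forward P/E, and the roughly $1.2 billion of operating profit per point of HBM share). The function names, the linear scaling, and the five-point share gain are illustrative assumptions, not figures from Samsung or Goldman Sachs.

```python
def implied_forward_earnings(market_cap: float, forward_pe: float) -> float:
    """Forward earnings implied by a market cap and a forward P/E multiple."""
    return market_cap / forward_pe


def profit_uplift(share_points_gained: float, profit_per_point: float) -> float:
    """Operating-profit uplift from HBM share gains, assuming it scales linearly."""
    return share_points_gained * profit_per_point


MARKET_CAP = 1_000_000_000_000     # $1 trillion, as cited in the article
FORWARD_PE = 18                    # "roughly 18 times", as cited in the article
PROFIT_PER_POINT = 1_200_000_000   # Goldman Sachs estimate cited in the article
HYPOTHETICAL_SHARE_GAIN = 5        # assumption: a five-point share gain, for illustration

earnings = implied_forward_earnings(MARKET_CAP, FORWARD_PE)
uplift = profit_uplift(HYPOTHETICAL_SHARE_GAIN, PROFIT_PER_POINT)

print(f"Implied forward earnings: ${earnings / 1e9:.1f}B")
print(f"Operating-profit uplift from {HYPOTHETICAL_SHARE_GAIN} share points: ${uplift / 1e9:.1f}B")
```

On these inputs the multiple implies about $55.6 billion of forward earnings, and a hypothetical five-point share gain would be worth about $6 billion a year in operating profit if the per-point estimate held across that range.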

HBM pricing power rewrites the P&L

The economics of HBM production create a virtuous cycle that Samsung is now exploiting with precision. Each HBM3E stack requires 12 layers of DRAM dies bonded together through advanced through-silicon via (TSV) technology, a process that yields significantly higher revenue per wafer than conventional memory. Samsung's memory business, which historically operated on thin margins of 10–15%, is now seeing operating margins in the 35–40% range for its HBM product lines, according to Wolfe Research. This margin expansion flows directly into Samsung's consolidated P&L, where the memory segment accounts for roughly 40% of total operating profit. The company's capital expenditure plans reflect this priority: Samsung has committed to spending $45 billion on memory capacity expansion over the next three years, with the majority allocated to HBM-specific fabrication lines at its Pyeongtaek and Taylor, Texas campuses. The cash flow implications are equally significant. Samsung's free cash flow generation has accelerated from $8 billion in 2024 to a projected $22 billion in 2026, giving it the financial firepower to outspend SK Hynix and Micron in the race for next-generation HBM4 technology. This reinvestment cycle creates a moat that commodity memory makers never enjoyed: the high capital intensity of HBM production favors the largest players, and Samsung is the largest. The company's memory division is now generating operating profit margins that rival those of Nvidia's data center segment, a comparison that would have been unthinkable three years ago.
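To put the margin and cash flow figures above on a common scale, a rough Python sketch follows. It takes the ranges quoted in this section (10–15% historical margins, 35–40% HBM margins, free cash flow of $8 billion in 2024 and a projected $22 billion in 2026) and computes the ratio of margin midpoints and the implied compound annual growth rate. Using range midpoints is a simplifying assumption made here, and the helper names are hypothetical.

```python
def midpoint(low: float, high: float) -> float:
    """Midpoint of a quoted range, used here as a simplifying assumption."""
    return (low + high) / 2


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end / start) ** (1 / years) - 1


# Operating margins quoted in the article, in percent.
legacy_margin = midpoint(10, 15)   # historical memory margins
hbm_margin = midpoint(35, 40)      # HBM product lines, per Wolfe Research

# How much more operating profit each dollar of HBM revenue carries.
margin_ratio = hbm_margin / legacy_margin

# Free cash flow in billions of dollars: 2024 actual vs. 2026 projection.
fcf_growth = cagr(8.0, 22.0, years=2)

print(f"Margin midpoint ratio (HBM vs. legacy): {margin_ratio:.1f}x")
print(f"Implied FCF CAGR, 2024-2026: {fcf_growth:.1%}")
```

At the midpoints, a dollar of HBM revenue carries about three times the operating profit of a dollar of legacy memory revenue, and the free cash flow projection implies growth of roughly 66% a year over the two-year span.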

SK Hynix and Micron scramble for share

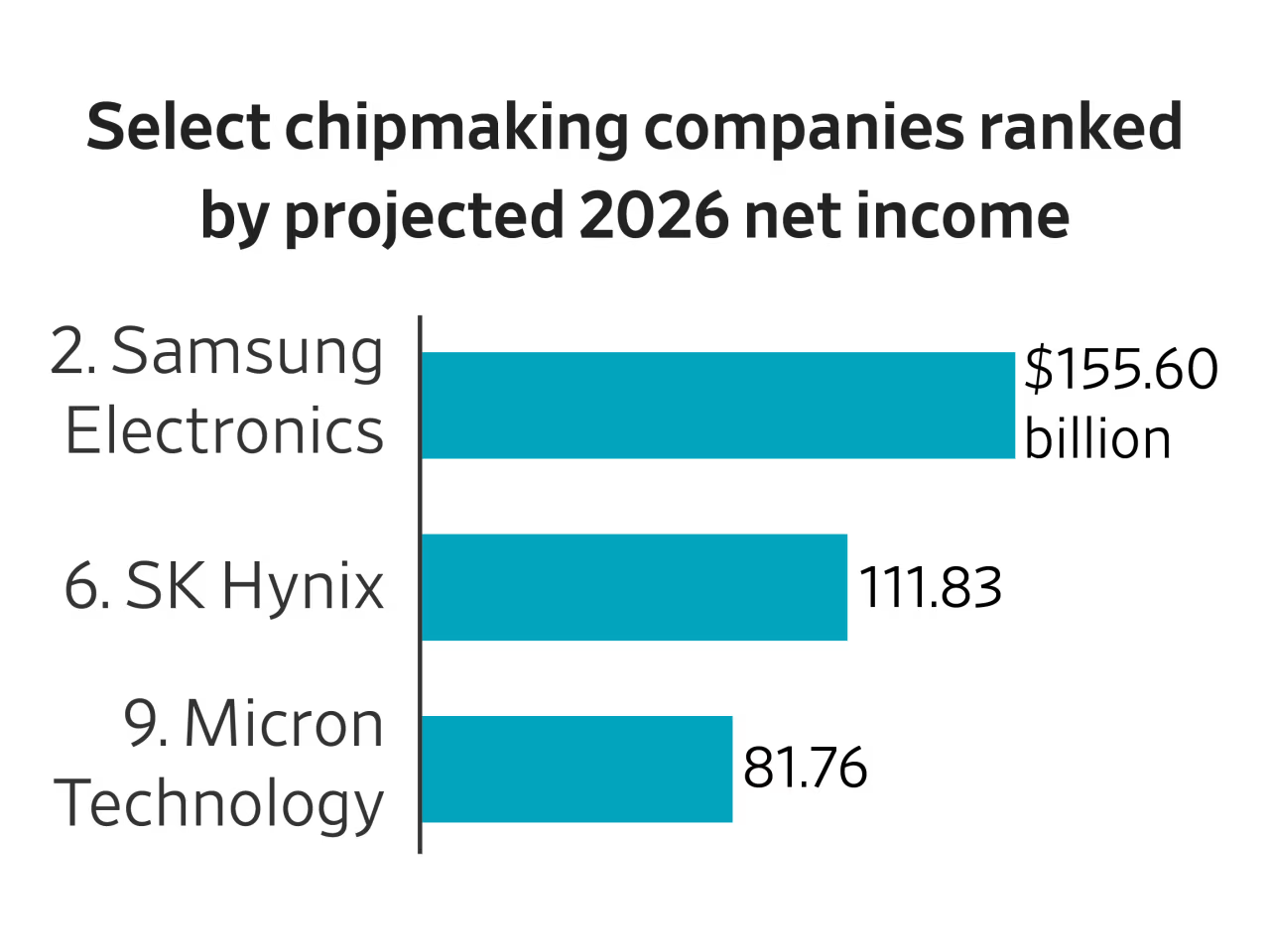

Samsung's trillion-dollar milestone masks an intensely competitive three-way race for HBM dominance. SK Hynix, which pioneered HBM technology and supplies Nvidia's current-generation HBM3 modules, is fighting to maintain its first-mover advantage. The company reported a 42% revenue increase in its memory division last quarter, driven entirely by HBM sales, and is investing $30 billion in a new HBM fabrication complex in Cheongju, South Korea. Micron, the smallest of the three, has surprised the market with a 30%+ stock price surge since late April, reflecting investor belief that its HBM3E qualification with Nvidia will unlock significant revenue. The competitive dynamics are shifting rapidly. Samsung recently secured qualification for its HBM3E product with an unnamed major hyperscaler, a win that analysts at Wedbush estimate could add $3–4 billion in incremental revenue in the second half of 2026. The real battleground, however, is HBM4, the next-generation standard expected to enter production in 2027. Samsung is betting on its hybrid bonding technology, which eliminates the need for microbumps between DRAM layers, to deliver a density advantage over SK Hynix's approach. The winner of the HBM4 race will capture pricing premiums of 50–60% over HBM3E, according to industry estimates, making the stakes existential for all three players. SK Hynix is countering with its own advanced packaging investments, including a dedicated R&D center for next-generation memory stacking technology. The three companies, despite fierce competition, all benefit from the same structural tailwind: AI data center operators cannot build fast enough, and every new GPU cluster installation increases memory demand. 
Samsung's $1 trillion milestone is therefore not just a company-specific achievement but a clear industry signal that memory chips have permanently ascended to the top of the semiconductor value chain, displacing the logic-chip designers that dominated the previous decade of computing.

The hyperscaler paradox: in-house chips and memory demand

The HBM boom is creating an unexpected dynamic for the hyperscalers that are simultaneously developing their own AI chips. Amazon's Trainium and Alphabet's TPU are designed to reduce dependence on Nvidia GPUs, but both architectures require the same HBM memory modules that are in short supply. This creates a paradox: the more successful hyperscalers are at developing in-house silicon, the more HBM they need to procure. Amazon has already signed multi-year HBM supply agreements with Samsung and SK Hynix to secure memory for its Trainium2 deployments, effectively locking up capacity that might otherwise go to Nvidia's GPU customers. Alphabet's TPU v5, which uses HBM3E, is driving similar procurement strategies. The downstream implications for the semiconductor supply chain are profound. HBM production consumes approximately three times the wafer capacity of conventional DRAM per gigabyte, meaning the memory industry's total silicon demand is growing faster than its bit shipments. This is squeezing capacity for other memory-intensive applications, including enterprise SSDs and graphics memory for gaming. The capex boom at Samsung, SK Hynix, and Micron, which collectively exceeds $80 billion over the next two years, is being funded by hyperscaler prepayments and long-term contracts, effectively transferring the capital risk from memory makers to cloud providers. This financial engineering creates a self-reinforcing cycle: hyperscalers fund HBM capacity expansion, which enables more AI infrastructure, which drives more memory demand. The prepayment model has become standard practice, with hyperscalers now routinely committing to three-year supply agreements that include upfront cash payments to secure allocation.
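The wafer arithmetic behind that squeeze can be sketched in a few lines of Python. The model below assumes the article's figure of roughly three times the wafer capacity per gigabyte for HBM and asks how total wafer demand changes as a growing share of bit output shifts to HBM while total gigabytes shipped stay constant. The function name, the constant-output assumption, and the sample shares are illustrative, not industry data.

```python
def wafer_demand_multiplier(hbm_share_of_bits: float, hbm_wafer_ratio: float = 3.0) -> float:
    """Total wafer demand relative to an all-conventional-DRAM baseline.

    hbm_share_of_bits: fraction of gigabytes shipped as HBM (0.0 to 1.0).
    hbm_wafer_ratio:   wafer capacity per gigabyte of HBM relative to
                       conventional DRAM (about 3x, per the article).
    """
    if not 0.0 <= hbm_share_of_bits <= 1.0:
        raise ValueError("hbm_share_of_bits must be between 0 and 1")
    conventional = 1.0 - hbm_share_of_bits
    return conventional + hbm_share_of_bits * hbm_wafer_ratio


# Hypothetical HBM shares of total bit output, chosen only for illustration.
for share in (0.0, 0.10, 0.25, 0.50):
    multiplier = wafer_demand_multiplier(share)
    print(f"HBM at {share:.0%} of bits -> {multiplier:.2f}x baseline wafer demand")
```

Under these assumptions, wafer demand rises by two percentage points for every point of bit output that moves to HBM, so shifting a quarter of output to HBM would require about 1.5 times the baseline wafer capacity for the same number of gigabytes.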

Nvidia's valuation disconnect signals a market shift

The most telling signal of the HBM-driven market transformation is Nvidia's relative underperformance. The AI chip leader trades at a forward EV/EBITDA ratio of just 18.23, a value-play multiple for a company that analysts project will grow 60–70% this year and next, yet its stock has lagged peers Intel and Micron, which are up more than 30% since late April, and AMD, which has gained roughly 20%. Wedbush analyst Matt Bryson notes that the valuation and growth projections simply do not align, suggesting the market is pricing in a structural shift in where value accrues in the AI supply chain. Goldman Sachs analyst James Schneider maintains a $250 12-month price target on Nvidia, implying a 30x P/E multiple, but acknowledges that the memory chip bottleneck and hyperscaler in-house chip progress are creating headwinds. The implication is clear: investors are re-rating the semiconductor industry away from a GPU-centric model toward a memory-centric one. Nvidia's near-monopoly on AI training silicon is no longer sufficient to command the growth premium it once did, because the real constraint on AI infrastructure is no longer compute. It is memory bandwidth. Samsung, SK Hynix, and Micron are the gatekeepers of that bandwidth, and their market caps reflect that newfound leverage. The divergence in valuation multiples between memory makers and GPU designers has widened to levels not seen since the early days of the PC revolution.

The HBM-driven reordering of the semiconductor industry is still in its early innings, and the implications for investors and strategists are profound. Samsung's $1 trillion valuation will likely prove a floor, not a ceiling, as HBM4 production ramps and the company's foundry business begins to benefit from hyperscaler custom chip orders. The real question is whether Nvidia can reclaim its growth premium by vertically integrating into memory, perhaps through acquisition or partnership, or whether the market has permanently revalued the AI supply chain around memory bandwidth as the binding constraint. For now, the momentum is with the memory makers. Hyperscaler capital expenditure, which Goldman Sachs estimates will exceed $300 billion collectively in 2027, will continue to flow disproportionately to HBM capacity expansion, funding a capex cycle that locks in Samsung's advantage for years to come. The winners in the next phase of AI will be those who control the physical infrastructure of memory, not just the logic that processes data through it.
