Samsung became the second Asian company to reach a $1 trillion market value, riding an AI-driven boom in high-bandwidth memory (HBM) chips that has reshuffled the semiconductor sector’s pecking order. The South Korean giant’s milestone, fueled by demand from hyperscaler data centers, stands in stark contrast to the fate of Nvidia, the GPU leader whose stock has lagged its chip-making peers even though it remains central to the AI revolution. Samsung, SK Hynix, and Micron have all surged, and Intel and Micron are each up more than 30% since late April. Nvidia’s stock, by contrast, has stalled: its forward EV/EBITDA ratio sits at 18.23, and the 60-70% growth that analysts project has yet to show up in share price performance. The divergence signals a structural shift in how the AI chip market rewards different layers of the supply chain, and it matters now because the bottleneck is no longer just compute. It is memory, and the companies that control it are capturing a larger share of the value.

Samsung’s $1 trillion milestone from HBM demand

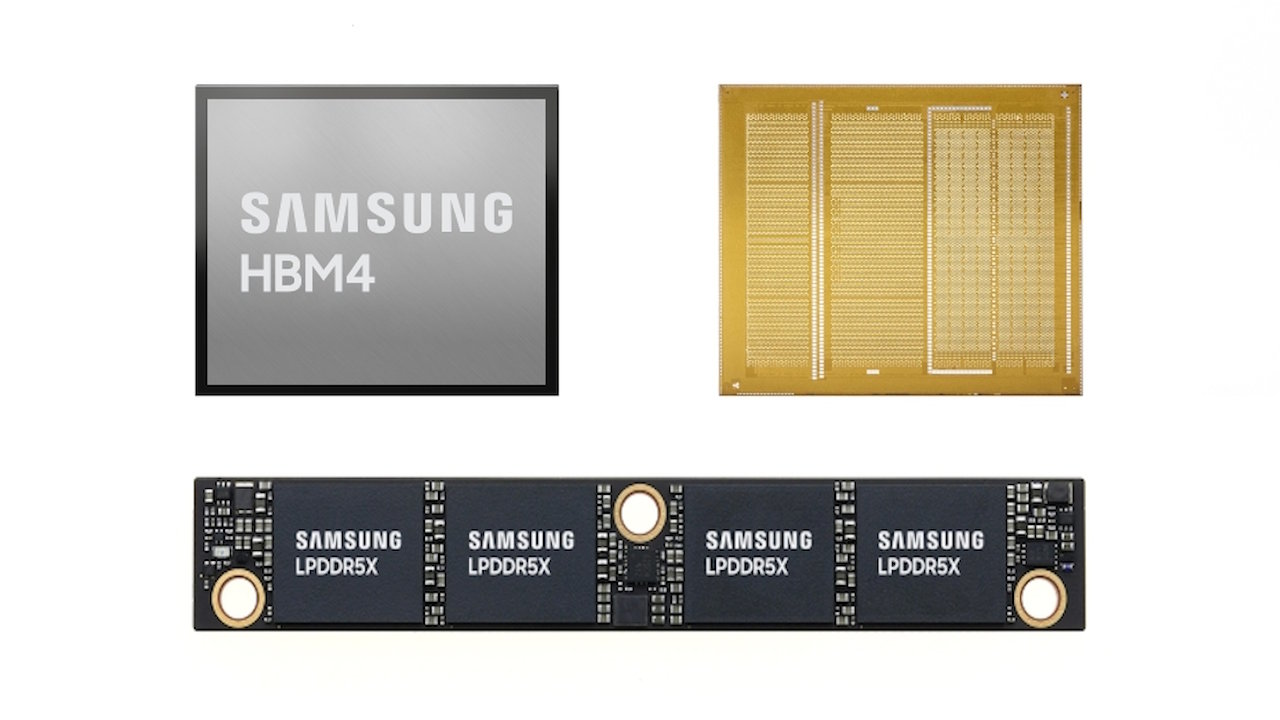

Samsung’s ascent to $1 trillion market capitalization is a direct consequence of the AI boom’s insatiable appetite for HBM chips, the specialized memory modules that sit alongside Nvidia’s GPUs in AI training clusters. The three largest memory chip makers, Samsung, SK Hynix, and Micron, are all struggling to meet demand, forcing them to shift investment from consumer chip production to HBM manufacturing. Samsung’s market value crossed the trillion-dollar threshold as the company ramped up production of its fifth-generation HBM3E chips, which are now being qualified by major hyperscaler customers. The company is competing aggressively with SK Hynix, which has been the early leader in HBM supply to Nvidia, but Samsung’s sheer scale in overall memory production, spanning DRAM, NAND, and now advanced packaging, gives it a manufacturing cost advantage that analysts at Goldman Sachs have flagged as a key differentiator. The $1 trillion valuation makes Samsung the second Asian company to reach that milestone, and it reflects a market that is pricing in sustained HBM revenue growth for years to come. The S&P 500 semiconductor index is expected to report 109.8% earnings growth for the first quarter, and memory makers are the primary beneficiaries of that expansion. Samsung’s memory division alone now accounts for a larger share of the company’s total valuation than its mobile or consumer electronics segments, a reversal from just two years ago when memory was considered a cyclical drag.

How HBM pricing power drives Samsung’s P&L

The financial mechanics of Samsung’s rally are rooted in HBM pricing dynamics that have transformed the company’s memory division from a cyclical commodity business into a high-margin growth engine. HBM chips command a significant premium over standard DRAM, typically three to five times the price per gigabit, because they require advanced through-silicon via (TSV) packaging and tighter binning tolerances. Samsung’s memory segment, which had been under pressure from consumer chip oversupply in 2023, has seen its operating margins expand dramatically as HBM now accounts for an estimated 25-30% of total DRAM bit shipments. The company’s shift in capital expenditure toward HBM production lines, which require specialized equipment for stacking and bonding, has created a self-reinforcing cycle: higher HBM output drives better utilization of advanced packaging facilities, which in turn lowers unit costs and widens margins. Wedbush analyst Matt Bryson has noted that Samsung’s HBM revenue could exceed $40 billion in 2026 if current pricing trends hold, a figure that would represent roughly 15% of the company’s total projected revenue. This margin expansion is the primary reason Samsung’s market value crossed $1 trillion, as investors are now valuing the memory business on a multiple closer to semiconductor design companies than traditional commodity chip makers. The shift is visible in Samsung’s quarterly earnings, where memory operating profit has more than tripled year-over-year, outpacing every other division in the conglomerate.
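The pricing arithmetic in the paragraph above can be made concrete with a rough sketch. It takes the article's two estimates at face value (HBM at 25-30% of DRAM bit shipments, priced at three to five times standard DRAM per gigabit) and assumes, purely for illustration, that all non-HBM DRAM sells at a single uniform price. The function name `hbm_revenue_share` is hypothetical, and the output is a back-of-envelope figure, not a reported number.

```python
def hbm_revenue_share(bit_share: float, price_multiple: float) -> float:
    """Fraction of DRAM revenue from HBM.

    bit_share:      HBM's share of total DRAM bits shipped (0..1)
    price_multiple: HBM price per gigabit relative to standard DRAM (= 1.0)
    Assumption: all non-HBM DRAM sells at one uniform price.
    """
    hbm_revenue = bit_share * price_multiple
    standard_revenue = (1.0 - bit_share) * 1.0
    return hbm_revenue / (hbm_revenue + standard_revenue)


# Low and high ends of the ranges quoted in the article.
low = hbm_revenue_share(bit_share=0.25, price_multiple=3.0)
high = hbm_revenue_share(bit_share=0.30, price_multiple=5.0)

# Total revenue implied by "$40 billion is roughly 15% of projected revenue".
implied_total_revenue_bn = 40.0 / 0.15

print(f"HBM share of DRAM revenue: {low:.0%} to {high:.0%}")
print(f"Implied total revenue: ${implied_total_revenue_bn:.0f} billion")
```

Under these simplifying assumptions, a minority of bits accounts for half or more of DRAM revenue, which is the mechanism behind the margin expansion described above.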

Memory makers gain while GPU leader stalls

The chip sector’s divergence is most visible in the relative performance of memory makers versus GPU designers. Samsung, SK Hynix, and Micron have all seen their stocks rally sharply, and Intel and Micron are each up more than 30% since April 27. Nvidia, by contrast, has underperformed despite reporting revenue growth that would be the envy of any other company. The reason is twofold: hyperscalers are developing in-house chips that reduce their dependence on Nvidia’s GPUs, and the memory bottleneck is shifting value to HBM suppliers. Alphabet’s TPUs and Amazon’s Trainium chips are now being deployed in production AI workloads, and while they do not match Nvidia’s raw performance for training large models, they offer superior total cost of ownership for inference tasks. Goldman Sachs analyst James Schneider has pointed out that hyperscaler capital expenditure remains a positive driver for the entire chip ecosystem, but the spending is increasingly flowing to memory and networking components rather than just GPUs. Nvidia’s forward EV/EBITDA ratio of 18.23 is actually lower than that of some memory makers when adjusted for growth, and Goldman’s 12-month price target of $250 implies a 30x P/E multiple that the market has been reluctant to grant. The competitive dynamic is creating a two-tier market where memory companies capture the scarcity premium while GPU vendors face margin compression from in-house alternatives. This structural shift is reflected in the relative valuation multiples: memory makers now trade at forward P/E ratios that are within 10-15% of Nvidia’s, a compression that would have been unthinkable two years ago.
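The relationship between the price target and the multiple quoted above is simple division, sketched below. The article does not say whether the 30x multiple applies to forward or trailing earnings, so the per-share figure is only what the two quoted numbers imply together; `implied_eps` is a hypothetical helper name.

```python
def implied_eps(price_target: float, pe_multiple: float) -> float:
    """Earnings per share implied by a price target at a given P/E multiple."""
    return price_target / pe_multiple


# Figures quoted in the article: $250 target, 30x P/E.
eps = implied_eps(price_target=250.0, pe_multiple=30.0)
print(f"Implied EPS: ${eps:.2f}")
```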

Downstream effects on hyperscalers, servers, and enterprise buyers

The memory boom is rippling through the entire AI infrastructure supply chain, creating winners and losers among server makers and enterprise buyers. Super Micro surged 14.3% after forecasting fourth-quarter revenue above expectations, driven by strong demand for AI servers that pack HBM-equipped GPUs and memory modules. The company, along with Dell and Hewlett Packard Enterprise, is benefiting from hyperscalers’ rush to build out AI clusters that require massive amounts of HBM. A single Nvidia B300 server can contain over 1.5 terabytes of HBM3E memory. Super Micro CEO Charles Liang has been aggressively ramping production capacity despite legal clouds from an ongoing SEC investigation, betting that AI server demand will outweigh regulatory risks. On the enterprise side, the HBM shortage is forcing companies to lock in multi-year supply agreements with memory makers, effectively pre-paying for capacity that will not come online until 2027. This dynamic is creating a bifurcated market where large hyperscalers with balance sheet strength can secure HBM allocations while smaller enterprises face extended lead times. The capex implications are significant: Samsung and SK Hynix are expected to invest a combined $50 billion in HBM production capacity over the next three years, a figure that will flow through to equipment suppliers and packaging subcontractors. Server makers are already reporting that HBM availability, not GPU supply, is the primary constraint on their delivery timelines. Equipment suppliers to the memory fabs, including ASML, Applied Materials, and Tokyo Electron, are seeing order books expand well into 2028 as Samsung and SK Hynix commit to accelerated buildout of HBM production lines. For enterprise IT buyers, the practical consequence is that AI cluster procurement timelines have lengthened from weeks to quarters, and the companies that locked in HBM supply agreements in late 2025 now hold a measurable competitive advantage over those that waited. 
The memory constraint has effectively become a strategic moat: organizations that secured supply early are deploying inference capacity faster and at lower per-token cost than latecomers scrambling to source HBM on the spot market.

What the Samsung-Nvidia divergence signals about AI’s next phase

The market’s divergent treatment of Samsung and Nvidia is a leading indicator that the AI chip industry is entering a new phase where memory, not just compute, determines the pace of innovation. The HBM shortage has become the single biggest bottleneck in AI infrastructure deployment, and the companies that control memory production are now pricing that scarcity into their valuations. This shift has profound implications for how the industry will allocate capital and talent going forward. WISeKey, a Swiss cybersecurity company, is already betting on the next frontier: post-quantum and space-based security. CEO Carlos Moreira has outlined plans for a Quantum Spatial Orbital Cloud (QSOC) that would use a constellation of 100 satellites, of which 21 have been launched and 14 are operational, to provide quantum-secure key distribution from orbit. WISeKey reported $19.3 million in revenue for 2025, up 62% year-over-year, with its SEALSQ subsidiary growing 66% and holding over $535 million in cash as of April 2026. The company’s pivot from terrestrial cybersecurity to space-based quantum infrastructure mirrors the broader industry trend: as AI workloads become more distributed and sensitive, the security layer is moving from software to hardware to orbital architecture. The message from the chip sector is clear: the next trillion-dollar opportunity will not be in designing faster processors, but in building the memory, packaging, and security infrastructure that makes them useful.

The Samsung-Nvidia divergence is not a one-quarter anomaly but a structural repricing of where value is created in the AI chip stack. Memory makers have demonstrated that scarcity pricing can generate margins that rival GPU design houses, and hyperscalers are responding by locking in supply and developing their own alternatives. Nvidia will remain a dominant force in AI training for the foreseeable future, but its monopoly on value capture is breaking. The companies that own the physical layers (memory fabs, advanced packaging lines, and satellite constellations) are the ones that will define the next cycle of AI infrastructure investment. Investors who focus only on the GPU layer are missing the bigger story: the AI boom is becoming a memory boom, and the winners are the companies that can build, not just design.
