David Silver spent the better part of a decade at DeepMind teaching machines to beat grandmasters and then master dozens of Atari games, without being shown how humans play. Now he has raised $1.1 billion to see how far that same idea can go when applied to the frontier of machine intelligence.

Ineffable Intelligence, the London-based startup Silver co-founded in late 2025, announced on April 27 a seed round of $1.1 billion at a post-money valuation of $5.1 billion, making it the largest seed round in European history by a wide margin. The round was co-led by Sequoia Capital and Lightspeed Venture Partners, with Nvidia, Google, Index Ventures, DST Global, and the UK's new Sovereign AI Fund all writing checks. The timing lands against a backdrop that looks increasingly like a mass exodus from the major AI labs. Within days, rival startup Recursive Superintelligence confirmed raising at least $500 million at a $4 billion pre-money valuation from GV (Google Ventures) and Nvidia. CNBC, meanwhile, reported that VCs have funneled $18.8 billion into AI startups founded since the start of 2025, on pace to exceed the record $27.9 billion that flowed last year into companies launched in 2024.

The acceleration signals that the frontier is fragmenting. Google DeepMind, Meta, and OpenAI are still writing the largest checks in AI history, but a cohort of the researchers who built those organisations' most distinctive capabilities is now betting it can do more from the outside.

Reinforcement Learning Without a Human Blueprint

Ineffable's central claim is that the dominant paradigm (train on human-generated text and code, fine-tune on human feedback, repeat) has a structural ceiling. Silver, who led DeepMind's reinforcement learning research and was lead scientist on AlphaGo, AlphaZero, and Gemini, argues the ceiling becomes visible when you ask systems to produce genuinely novel reasoning rather than sophisticated interpolation across what humans already know.

The proposed alternative is a "superlearner" architecture in which the model learns through trial, error, and reward signal rather than through supervised imitation. AlphaZero never watched a grandmaster's game; it played millions against itself, discovered the same openings human players refined over centuries, and then went past them. Silver believes the same strategy, applied at scale with modern compute, can produce systems capable of compressing decades of scientific progress into months.
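To make "trial, error, and reward signal" concrete, here is a toy sketch of learning from self-play alone. It is not Ineffable's architecture or AlphaZero's algorithm (which pairs neural networks with tree search); it is plain tabular Q-learning on a tiny, hypothetical game chosen for illustration: a single pile of stones, each player removes one to three, and whoever takes the last stone wins. The function names, pile size, and hyperparameters are all assumptions.

```python
import random

MAX_TAKE = 3  # a move removes 1..3 stones; taking the last stone wins


def legal_moves(pile):
    return range(1, min(MAX_TAKE, pile) + 1)


def best_move(q, pile):
    # Greedy choice under the current value table (lowest move wins ties).
    return max(legal_moves(pile), key=lambda a: q.get((pile, a), 0.0))


def train_by_self_play(max_pile=12, episodes=20000, alpha=0.5, epsilon=0.3, seed=0):
    rng = random.Random(seed)
    q = {}  # (pile, move) -> estimated outcome for the player about to move
    for _ in range(episodes):
        pile = rng.randint(1, max_pile)
        while pile > 0:
            moves = list(legal_moves(pile))
            # Trial and error: mostly exploit, sometimes try a random move.
            move = rng.choice(moves) if rng.random() < epsilon else best_move(q, pile)
            remaining = pile - move
            if remaining == 0:
                target = 1.0  # reward: the mover took the last stone and won
            else:
                # The opponent moves next, so its best outcome is our loss.
                target = -max(q.get((remaining, a), 0.0) for a in legal_moves(remaining))
            old = q.get((pile, move), 0.0)
            q[(pile, move)] = old + alpha * (target - old)
            pile = remaining  # the same table now plays the other side
    return q


if __name__ == "__main__":
    q = train_by_self_play()
    for pile in (5, 6, 7, 9, 10, 11):
        print(pile, "->", best_move(q, pile))
```

No human games appear anywhere in the loop. The only supervision is the win signal at the end of each game, yet the table should settle on the known optimal strategy for this game, which is to leave the opponent a multiple of four stones. Scaling that idea from a twelve-stone pile to open-ended scientific problems is the unproven part.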

What Ineffable will not say publicly is what specific capabilities it expects to demonstrate, or on what timeline. The $1.1 billion buys runway and compute, not a shipped product; Nvidia's participation almost certainly involves forward allocation of Blackwell GPU clusters. The company has not published research or an arXiv preprint. Silver's track record is the entire evidence base that investors are pricing at $5.1 billion.

Sequoia, Nvidia, and the Strategic Logic of Backing Both Sides

The investor list tells a more precise story than the headline valuation. Sequoia and Lightspeed are making a straightforward bet that the post-LLM paradigm produces a category-defining company, and that Silver is likelier than anyone else to build it given his prior output. Google and Nvidia's presence is more layered.

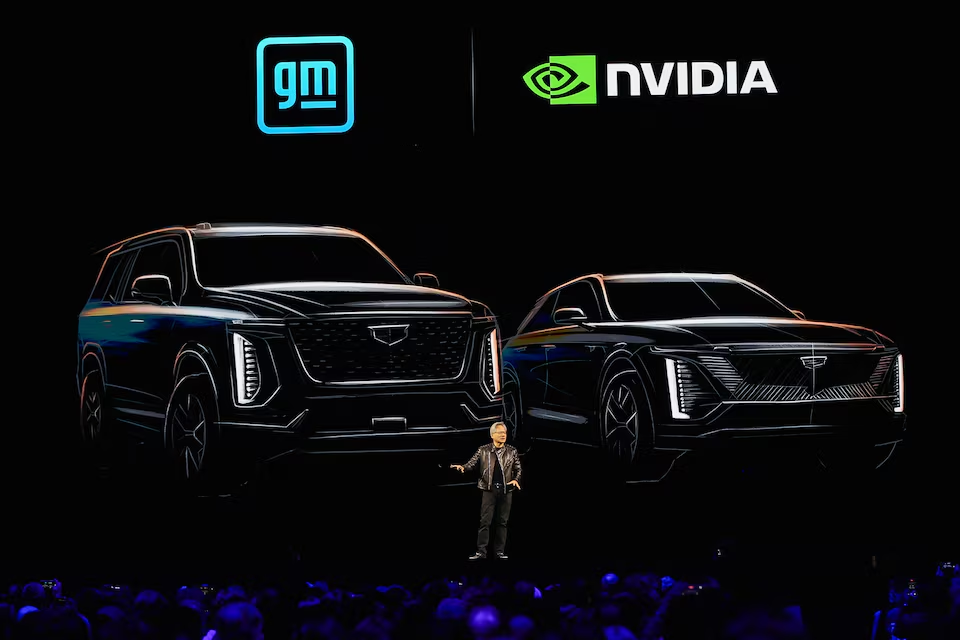

For Nvidia, co-investing in Ineffable and Recursive Superintelligence simultaneously follows its standard playbook of purchasing exposure to every credible approach to frontier AI. If either company's architecture requires novel compute, and reinforcement learning at scale almost certainly generates more distinctive GPU demand patterns than batch inference, Nvidia wants to be close enough to the roadmap to shape it. The participation also carries an implicit endorsement that the technical agenda is real rather than vaporware, which matters for recruiting.

Google's position is more uncomfortable. The company's own DeepMind unit invented the reinforcement-learning techniques Silver is now commercialising without it. Investing in Ineffable is partly insurance (if RL-native architectures leapfrog transformers, Google has a stake in the outcome) and partly talent diplomacy. Silver remains on professional terms with Alphabet leadership, and the relationship presumably makes future collaborations easier to initiate than if DeepMind had been purely adversarial about his departure.

The UK Sovereign AI Fund's participation is smaller in dollar terms but significant as a policy instrument: the British government has decided that backing the country's most credible superintelligence company is part of its national AI strategy, a posture Technology Secretary Liz Kendall's department formalised earlier in 2026.

Recursive Superintelligence and the Parallel Bet on Self-Improvement

Ineffable is not the only startup in this race raising nine figures or more from a standing start. Recursive Superintelligence, founded in December 2025 by Richard Socher (formerly Salesforce's chief scientist), Tim Rocktäschel (a former principal scientist at Google DeepMind), and researchers from OpenAI, Meta, and Google, pulled in at least $500 million at a $4 billion pre-money valuation led by GV with Nvidia as a strategic participant.

The company, which had 20 employees at the time of the raise, is pursuing a distinct technical vision: automating the entire pipeline of frontier AI development, covering evaluation, data selection, training, post-training, and research direction, so that the system continuously improves without human intervention between iterations. Where Ineffable wants a model that learns skills from scratch through RL, Recursive Superintelligence wants a machine that runs the lab.

The distinction matters commercially. If Socher and Rocktäschel succeed, the output is a meta-capability that could replace large fractions of an AI research organisation. That framing simultaneously explains the $4 billion pre-money valuation (the addressable market is every dollar currently spent on AI R&D) and the unease it creates among the same investors who are funding it. GV is, after all, an Alphabet corporate venture arm, and Recursive Superintelligence's ambition is to make human researchers at organisations like DeepMind dramatically less necessary.

Both companies plan public launches around mid-2026. Neither has a revenue-generating product today.

Why $18.8 Billion Is Chasing Four-Month-Old Companies

The capital flowing into Ineffable and Recursive Superintelligence is a symptom of a broader market dynamic that has been building since late 2025. CNBC reported that venture capital has funneled $18.8 billion into AI startups founded since the start of 2025, tracking ahead of the $27.9 billion invested in 2024-vintage companies during all of 2025. Former Meta chief AI scientist Yann LeCun's AMI Labs raised $1.03 billion in March at a $3.5 billion pre-money valuation to develop world-model architectures, another departure from the transformer-on-human-text paradigm.

The structural logic is that the major labs (Meta, Google DeepMind, OpenAI, Anthropic) have grown large enough that entire research programs get deprioritised in favour of the areas most directly tied to near-term revenue. Novel architectures, interpretability, and vertical models are not producing quarterly results at hyperscaler scale, which creates an opening. Researchers who believe those deprioritised bets will prove decisive are finding external capital readily available at valuations that would have been unimaginable before 2024.

The risk embedded in this dynamic cuts both ways. If post-LLM architectures do not prove superior to scaled transformers within a two-to-three-year window, many of these companies will have raised against an implied product they cannot ship. The seed valuations, however, already price in a significant probability that at least one of them will succeed, which is why Sequoia and Lightspeed can write billion-dollar seed checks without apology: the expected value calculation clears even at low individual hit rates given the magnitude of the potential outcome.
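The expected value logic can be sketched in a few lines. Every number below is hypothetical and chosen only to show the shape of the argument; none is drawn from Sequoia's, Lightspeed's, or any other investor's actual model, and the all-or-nothing payoff is a deliberate simplification.

```python
def expected_multiple(hit_rate, payoff_multiple):
    """Expected return multiple of a bet that pays payoff_multiple with
    probability hit_rate and returns nothing otherwise."""
    return hit_rate * payoff_multiple


def break_even_hit_rate(payoff_multiple):
    """Smallest hit rate at which the bet returns its cost in expectation."""
    return 1.0 / payoff_multiple


def prob_at_least_one_hit(hit_rate, n_bets):
    """Chance that at least one of n independent bets pays off."""
    return 1.0 - (1.0 - hit_rate) ** n_bets


if __name__ == "__main__":
    hit_rate = 0.05        # hypothetical: 1-in-20 chance a given bet works
    payoff_multiple = 50   # hypothetical: a winner returns 50x the check
    n_bets = 10            # hypothetical: number of independent bets placed

    print(f"expected multiple per bet: {expected_multiple(hit_rate, payoff_multiple):.2f}x")
    print(f"break-even hit rate:       {break_even_hit_rate(payoff_multiple):.1%}")
    print(f"P(at least one hit):       {prob_at_least_one_hit(hit_rate, n_bets):.1%}")
```

Under these assumed inputs a bet that fails 95 percent of the time still has a positive expected return, because the break-even hit rate falls as the assumed payoff grows. The calculation is only as good as the payoff estimate, and it treats the bets as independent, which competing wagers on the same post-LLM thesis may not be.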

Nvidia's Infrastructure Advantage in a Fragmented Frontier

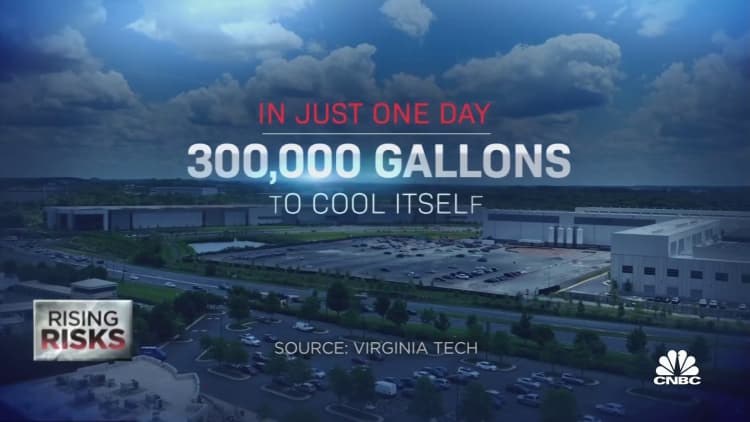

The one throughline connecting every company named above is Nvidia. Silver's RL-at-scale approach requires massive parallel environment simulation, GPU-bound compute that runs differently from standard forward-pass inference but still depends on the CUDA ecosystem. Socher's automated-lab vision needs accelerated training loops that benefit from Blackwell's per-GPU memory bandwidth improvements over Hopper. LeCun's world models are expensive to train by design.

Nvidia's co-investment in multiple competing architectures is not hedging in the defensive sense; it is expanding the universe of use cases that require GPU clusters to build. If reinforcement learning or self-improvement architectures reach the scale that Silver and Socher believe they can, total GPU demand per frontier model will exceed the already steep requirements of GPT-class transformers. The company's Blackwell roadmap, which extends through 2027, is calibrated to serve exactly that scenario.

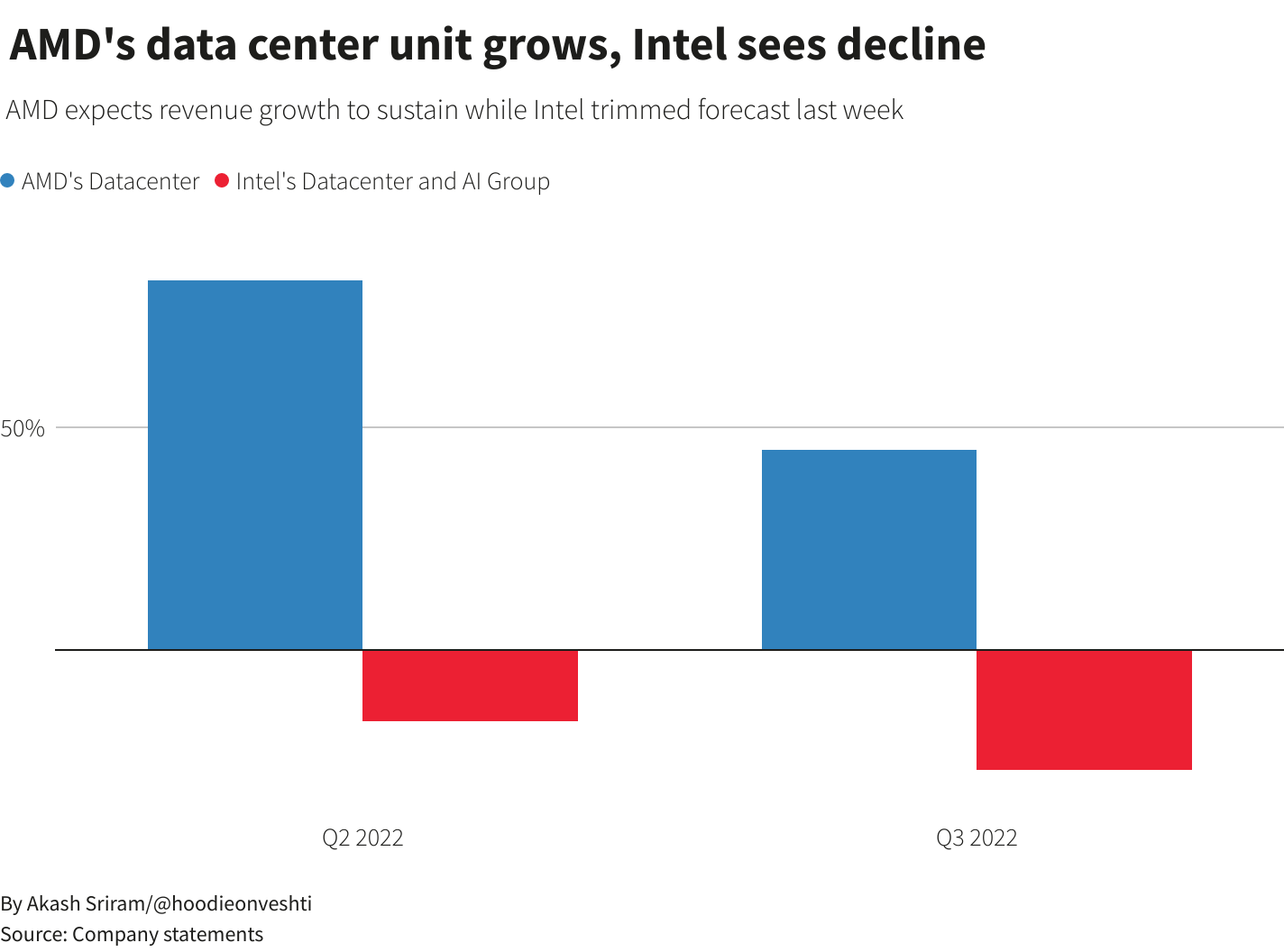

AMD and Intel remain structurally disadvantaged in this competition: their software ecosystems for RL simulation environments are immature relative to CUDA, and both companies lack the cloud partnership density that Nvidia has built with AWS, Azure, and Google Cloud. Custom silicon from Amazon (Trainium), Google (TPU), and Meta (MTIA) is capable for inference but generally lags on novel training workloads where the computation graph is less predictable.

The Sovereignty Signal and What Comes After Transformers

The UK government's decision to anchor its Sovereign AI Fund to Ineffable rather than to an established domestic player or an Anthropic subsidiary reflects a judgment that the next architectural wave will not emerge from the current large labs. That bet carries real stakes: the British Business Bank and the Sovereign AI Fund are ultimately allocating public capital to a six-month-old company whose only demonstrated asset is its founder's prior output at a different organisation.

If Silver and his team ship a working system that genuinely operates at superhuman capability on a bounded but meaningful task, the equivalent of AlphaZero's Go performance but in a domain that matters economically, the $1.1 billion will look like the cheapest institutional bet of the decade. If the RL-without-human-data thesis hits compute or sample-efficiency walls that the existing literature does not predict, Ineffable's $5.1 billion valuation will need to compress before a Series A is viable.

The broader industry is watching for a simpler signal: a research paper. Silver has not published from Ineffable yet. The moment he does, the technical community will form an independent view of whether the approach is plausible at scale, and the investor narrative will either harden into consensus or fragment into competing interpretations. Until that paper exists, the $1.1 billion is essentially a wager on the judgment of the people who co-wrote AlphaZero, a bet that carries historical justification but no guarantee.
