For six years, Microsoft held something no rival cloud had: exclusive access to OpenAI's technology stack. On April 27, 2026, that arrangement ended. Microsoft and OpenAI jointly announced a renegotiated partnership that strips the Redmond giant of its exclusivity and allows ChatGPT, GPT-5.5, and the broader OpenAI product catalog to run on Amazon Web Services and Google Cloud for the first time.

The deal resolves a legal crisis that had been building since February, when OpenAI signed a $50 billion cloud commitment with Amazon, a transaction that appeared to violate the original exclusivity terms. Instead of litigating, both sides chose to rewrite the contract. The resulting terms show a partnership evolving from an exclusive dependency into a commercial alliance between unequal but no longer entangled principals.

The shift matters well beyond corporate housekeeping. Enterprise buyers who had been locked into Azure to access OpenAI's frontier models now have three viable cloud options. Anthropic, which has spent two years marketing itself as the "safe, cloud-neutral" alternative, faces a more competitive OpenAI. And the cloud hyperscalers — already spending over $200 billion combined on AI infrastructure in 2026 — have just won the right to compete for a market that was previously off limits.

The Legal Risk That Forced Microsoft's Hand

When OpenAI announced Amazon's $50 billion investment commitment in February ($15 billion upfront and $35 billion conditional on performance targets), Microsoft's legal team recognized the problem immediately. The original partnership required OpenAI to host its commercial products exclusively on Azure. A $50 billion cloud contract with AWS was difficult to reconcile with that clause, according to people familiar with the negotiations cited by Bloomberg.

Microsoft considered legal action. That it chose renegotiation instead reflects the arithmetic of the situation: litigation against OpenAI would have damaged the very product relationships (Microsoft 365 Copilot, GitHub Copilot, Azure AI Foundry) that generated $7.5 billion in revenue for Microsoft last quarter. A broken OpenAI partnership would also have undermined the rationale for the $13 billion Microsoft had invested cumulatively since a $1 billion anchor round in 2019.

The revised terms thread the needle. Microsoft retains a non-exclusive license to OpenAI's intellectual property through 2032, meaning Azure can still sell GPT models and build Copilot products. Azure also remains OpenAI's primary cloud: OpenAI commits to shipping on Azure first, unless Microsoft lacks the technical capacity to support a given workload. But "primary" is no longer "exclusive," and the geographic and customer restrictions that had blocked AWS and Google Cloud are removed.

The AGI trigger clause — a provision in the original deal under which OpenAI's commercial obligations to Microsoft would have terminated upon reaching artificial general intelligence — is also gone. Both parties replaced it with a fixed 2032 sunset, removing a clause that VentureBeat described as "vague by design" and that had created uncertainty for both boards.

How the Revenue Math Changed: $7.5 Billion and a Revenue Cap

The financial architecture of the original deal had two legs: Microsoft paid a revenue share to OpenAI on Azure sales, and OpenAI paid Microsoft 20 percent of its revenues through 2030. The restructuring eliminates the first leg entirely. Microsoft no longer pays a revenue share to OpenAI for products hosted or sold on Azure.

The second leg, OpenAI's 20 percent payment to Microsoft, survives but is now capped. TechCrunch and VentureBeat both reported that the original arrangement tied the total payout to milestone achievements, including AGI attainment, which created an open-ended liability for OpenAI that grew with its revenues. With a hard cap in place and the AGI clause removed, OpenAI now faces a predictable, bounded payment rather than an escalating royalty obligation.

The significance is clearest in the forward model. OpenAI was generating approximately $2 billion per month in revenue as of early 2026. At an uncapped 20 percent, the Microsoft payment would have reached $400 million per month at that run rate, or roughly $4.8 billion a year, and would have kept rising as revenue grew. A cap limits that drag, improves OpenAI's unit economics, and makes the Amazon and Google cloud commitments structurally viable rather than legally hazardous. For Microsoft, the trade-off is straightforward: give up a growing royalty stream in exchange for a stable, long-term IP license and a clear path for its Copilot roadmap without existential partnership risk.
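To make the capped-versus-uncapped difference concrete, here is a minimal sketch of the royalty arithmetic. It assumes flat revenue of $2 billion a month (expressed in millions of dollars) and the 20 percent rate described above. The cap value and the choice to apply it cumulatively are hypothetical, since the reports cited here do not disclose the actual cap amount or how it is measured. The function name `royalty_schedule` and its parameters are illustrative only.

```python
from typing import Optional


def royalty_schedule(monthly_revenue: list[float],
                     rate_pct: int = 20,
                     total_cap: Optional[float] = None) -> list[float]:
    """Return the royalty owed each month (same units as revenue).

    Hypothetical model: a flat percentage of monthly revenue, optionally
    limited by a cumulative cap on total payments. The real cap's size and
    structure are not public; this only illustrates the mechanics.
    """
    paid = 0.0
    schedule = []
    for revenue in monthly_revenue:
        owed = revenue * rate_pct / 100  # uncapped royalty for this month
        if total_cap is not None:
            # Never pay more than what remains under the cumulative cap.
            owed = min(owed, max(total_cap - paid, 0.0))
        paid += owed
        schedule.append(owed)
    return schedule


if __name__ == "__main__":
    flat_year = [2_000] * 12  # $2B per month, in millions of dollars
    uncapped = royalty_schedule(flat_year)
    print(uncapped[0])    # 400.0 -> $400M per month
    print(sum(uncapped))  # 4800.0 -> $4.8B per year
    # Hypothetical $1B cumulative cap, for illustration only.
    print(royalty_schedule([2_000] * 4, total_cap=1_000))
```

Under the uncapped schedule the payment scales one-for-one with revenue, so any growth in OpenAI's run rate raises the obligation. Under a cumulative cap, the payments stop once the ceiling is reached, whatever revenue does afterward.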

Gil Luria, an analyst at D.A. Davidson & Co., told Bloomberg that Microsoft's willingness to give up exclusivity was a rational response to the scale of OpenAI's new cloud relationships. "The value of exclusivity decreases as the addressable market grows," Luria noted. "Microsoft already had $7.5 billion in OpenAI-related revenue last quarter. Protecting that relationship is worth more than preserving a legal monopoly that was increasingly unenforceable."

AWS and Google Cloud Move From Bystanders to Competitors

For the past three years, Amazon and Google have built their enterprise AI go-to-market around Anthropic. AWS Bedrock lists Claude as its flagship model. Google Cloud signed a $40 billion investment commitment with Anthropic in April 2026. Both hyperscalers positioned themselves as OpenAI alternatives precisely because OpenAI was unavailable on their platforms.

That positioning shifts materially after April 27. AWS Bedrock and Google Cloud Vertex AI can now offer ChatGPT and GPT-5.5 alongside Claude models, letting enterprise customers run multi-model architectures within a single billing relationship. For large financial institutions and healthcare systems that have standardized on AWS or Google Cloud for compliance reasons, this removes the last friction point preventing OpenAI adoption.

The competitive dynamic within the frontier model market also changes. Anthropic has benefited from being the only premium model available on all three major hyperscalers. That advantage narrows as OpenAI reclaims distribution parity. Anthropic's differentiation must now rest more heavily on its Constitutional AI safety claims, its audit transparency, and its enterprise contract terms — areas where the competitive gap is harder to narrow than cloud availability.

Microsoft, paradoxically, also benefits. Azure kept OpenAI's first-ship commitment in the revised terms. That means new model releases — GPT-6, API versions of upcoming reasoning models — still reach Azure customers before AWS or Google Cloud customers. Primary cloud status is worth less than exclusive status, but in practice it preserves a meaningful time advantage for enterprise Azure sales.

Enterprise Buyers Get a Three-Cloud AI Deployment Market

The operational impact on enterprise AI procurement is direct. Until April 27, a Fortune 500 company that had standardized on AWS or Google Cloud faced a genuine trade-off: to use OpenAI's frontier models at scale, it needed to add Azure as a second cloud vendor, accept data residency complications, and negotiate two separate security compliance frameworks. Many chose Anthropic instead, not for model quality reasons but for procurement simplicity.

That constraint is removed. AWS enterprise customers can now access GPT-5.5 and ChatGPT APIs through their existing AWS billing accounts and within their existing AWS IAM security perimeters. Google Cloud customers gain the same access through Vertex AI. The total addressable market for OpenAI's enterprise tier (currently the fastest-growing segment of its $2 billion monthly revenue base, at over 40 percent of total revenues) expands to include organizations that had structurally excluded themselves from OpenAI's distribution.

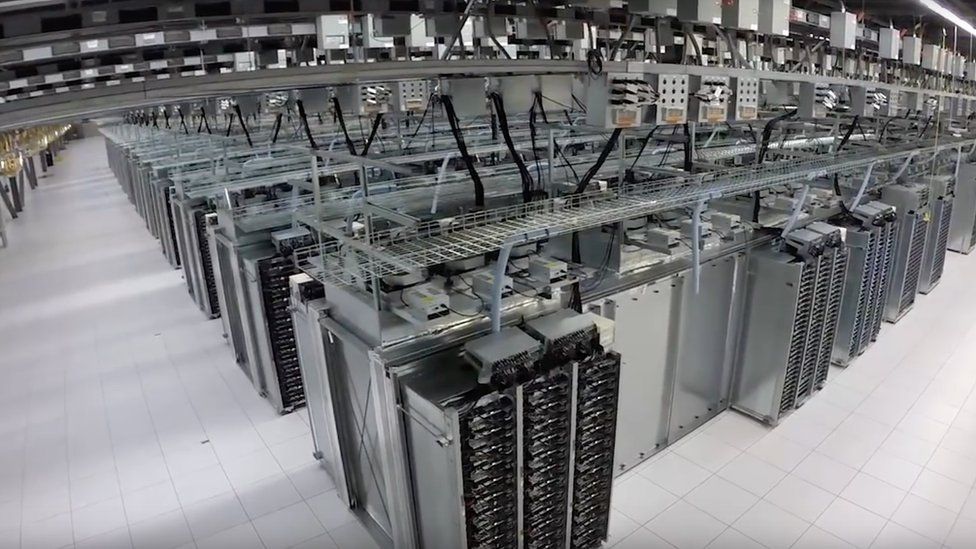

The capex implications extend to data center planning. Microsoft committed $80 billion to AI data center build-out in its fiscal year 2026 budget. Amazon and Google have committed comparable sums. As OpenAI workloads distribute across all three platforms, the hyperscalers face a capacity planning challenge: GPT inference demand that was previously concentrated on Azure will spread, requiring Amazon and Google to stand up inference endpoints fast enough to serve enterprise demand from day one of availability.

For the chip supply chain, the distribution shift has a secondary effect. Azure's OpenAI clusters run predominantly on NVIDIA H100 and H200 hardware. AWS and Google Cloud inference at scale will require comparable GPU density, adding to demand for NVIDIA's Blackwell GB200 NVL72 rack systems, which are already shipping in volume. Neither AMD's nor Intel's GPU efforts have meaningfully penetrated the frontier inference market, so the volume shift lands squarely in NVIDIA's revenue forecast.

Removing the AGI Clock: From Milestones to Markets

The deletion of the AGI trigger clause deserves its own analysis. The original agreement gave OpenAI an escape hatch: reach AGI, and the commercial obligations to Microsoft terminate. That provision reflected 2019-era thinking about the timeline and nature of AGI — a binary threshold event that would presumably make commercial revenue arrangements irrelevant.

By 2026, that framing had become incoherent. OpenAI was generating $2 billion per month without AGI. Its models scored above 50 percent on the Humanity's Last Exam benchmark. Whether any given capability constituted "AGI" was genuinely contested within the AI research community and increasingly within OpenAI's own leadership. A clause that terminated a $13 billion investment relationship on the basis of an undefined technical milestone was a liability for both parties.

The 2032 fixed sunset replaces ambiguity with a commercial horizon that boards, investors, and enterprise customers can underwrite. It signals that both Microsoft and OpenAI have shifted from framing their relationship as a "path to AGI" partnership into a straightforward software licensing and cloud infrastructure deal — one measured in revenue share rates, IP licensing terms, and data center commitments rather than alignment milestones.

That reframing has strategic consequences. OpenAI's governance restructuring toward a capped-profit structure earlier in 2026 was premised on commercializing AI capabilities reliably and at scale, and a fixed-horizon IP agreement with Microsoft is consistent with that posture. Several investors and analysts expect OpenAI to pursue a public offering within 18 months of its March 2026 valuation of $300 billion. That path benefits from commercial relationships that investors can model without open-ended AGI contingencies.

The end of Microsoft's exclusive access to OpenAI is, in the most precise sense, the end of the original AI arms-race logic that defined the 2019-to-2023 era: a single hyper-exclusive, capital-intensive partnership between a frontier lab and a hyperscaler built around the assumption that AI superiority was a winner-take-all prize. What replaces it is more prosaic and probably more durable — a multi-cloud distribution model where frontier AI capability is a commodity input, priced, licensed, and delivered like enterprise software. Microsoft helped build the AI era by placing the largest early bet on OpenAI. The price of staying in the deal, it turns out, was giving up the moat that bet had purchased.
