Digg, the once-dominant social news aggregator that defined the early internet's link-sharing culture, has relaunched as an AI news aggregator. It ranks the top 1,000 people in artificial intelligence, alongside leading AI companies and politicians, based on their engagement on X (formerly Twitter). The revived platform surfaces trending AI news by tracking which topics generate the most discussion among this curated set of accounts, effectively creating a real-time leaderboard of AI discourse. The relaunch coincides with a parallel regulatory push: Americans for Responsible Innovation, an advocacy group, is urging the Trump administration to require mandatory safety reviews for frontier AI models before their developers can win U.S. government contracts. Together, these developments signal that the AI ecosystem is maturing from a raw technology race into a structured market with its own media dynamics and compliance requirements. Why this matters now: the intersection of AI news consumption and government procurement policy will shape which companies capture mindshare and which win the next wave of federal spending.

How Digg's relaunch works

Digg's relaunch is a bet that the AI news cycle has become too fast and fragmented for traditional RSS readers or general-purpose aggregators. The site tracks X engagement among the top 1,000 people involved in AI, ranking them alongside the top companies and top politicians in the field. This creates a live feed of what the AI elite is discussing, from model releases to policy debates. The underlying mechanism is straightforward: Digg scrapes X for engagement metrics, including likes, reposts, and replies, from a defined set of accounts, then algorithmically surfaces the most-discussed stories. The company has not disclosed its funding or revenue model, but the approach mirrors the original Digg's reliance on user-driven curation, now automated and focused on a single vertical. The key question is whether the platform can attract enough everyday users beyond data nerds and industry insiders. For now, the site offers a valuable signal for investors, journalists, and product managers who need to know what the AI world is talking about in real time. The top 1,000 list includes figures like Sam Altman, Elon Musk, and Fei-Fei Li, with rankings updated daily based on X activity. The site also surfaces the most-shared articles from sources like arXiv, MIT Technology Review, and industry blogs.
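
Digg has not published its ranking formula. As a minimal sketch of the general technique described above (weighting likes, reposts, and replies on posts from a fixed set of tracked accounts, then sorting the linked stories by combined score), the following Python assumes hypothetical weights, a hypothetical `Post` record, and made-up handles and URLs:

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical weights: Digg has not disclosed how it weights each signal.
WEIGHTS = {"likes": 1.0, "reposts": 3.0, "replies": 2.0}


@dataclass
class Post:
    author: str     # X handle of the posting account
    story_url: str  # link the post shares
    likes: int
    reposts: int
    replies: int


def engagement(post: Post) -> float:
    """Weighted engagement score for a single post."""
    return (WEIGHTS["likes"] * post.likes
            + WEIGHTS["reposts"] * post.reposts
            + WEIGHTS["replies"] * post.replies)


def rank_stories(posts: list[Post], tracked: set[str], top_n: int = 10) -> list[tuple[str, float]]:
    """Sum engagement per story, counting only posts from tracked accounts."""
    scores: dict[str, float] = defaultdict(float)
    for post in posts:
        if post.author in tracked:
            scores[post.story_url] += engagement(post)
    # Highest combined engagement first, truncated to top_n stories.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:top_n]


tracked = {"alice_ai", "bob_ml"}
posts = [
    Post("alice_ai", "https://example.com/model-release", likes=100, reposts=20, replies=10),
    Post("bob_ml", "https://example.com/policy", likes=50, reposts=5, replies=40),
    # Outside the tracked set, so ignored despite its much larger engagement.
    Post("random_user", "https://example.com/policy", likes=10_000, reposts=900, replies=500),
]
# Prints [('https://example.com/model-release', 180.0), ('https://example.com/policy', 145.0)]
print(rank_stories(posts, tracked))
```

Counting only tracked accounts means a viral post from outside the list (here, `random_user`) does not move the ranking, which is what separates this style of curation from the open user voting the original Digg relied on.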

How the money flows through the P&L

The financial implications of Digg's pivot are modest in absolute terms but significant as a market signal. The company is betting that AI news aggregation can generate advertising revenue, subscription fees, or data licensing income from the very companies it ranks. If Digg captures even a fraction of the attention economy around AI, it could become a distribution channel for AI vendors, conference organizers, and recruiters. Meanwhile, the regulatory push from Americans for Responsible Innovation introduces a direct cost for AI labs. The group proposes that companies spending $100 million or more a year on compute, or generating $500 million in revenue annually from AI products and services, must pass safety reviews covering cyberattack and weapons development capabilities to be eligible for government contracts. This creates a new line item on the P&L for labs like OpenAI, Anthropic, and xAI: compliance costs for safety testing, documentation, and potential remediation. For companies already spending billions on compute, the incremental cost is manageable, but the review adds a gate that pushes back when labs can book revenue from federal deals.
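
The proposed coverage test is an either/or threshold. A minimal Python sketch of how a procurement team might encode it, assuming both figures are annual U.S. dollar amounts, that reaching either threshold triggers the review requirement, and that companies below both thresholds are exempt; the constant and function names are hypothetical:

```python
# Thresholds as reported for the Americans for Responsible Innovation proposal.
COMPUTE_THRESHOLD_USD = 100_000_000  # annual compute spend
REVENUE_THRESHOLD_USD = 500_000_000  # annual revenue from AI products and services


def requires_safety_review(annual_compute_usd: float, annual_ai_revenue_usd: float) -> bool:
    """True if a company meets either threshold (assumed inclusive)."""
    return (annual_compute_usd >= COMPUTE_THRESHOLD_USD
            or annual_ai_revenue_usd >= REVENUE_THRESHOLD_USD)


def eligible_for_contract(annual_compute_usd: float,
                          annual_ai_revenue_usd: float,
                          passed_review: bool) -> bool:
    """Covered companies must have passed review; exempt companies are not gated."""
    if requires_safety_review(annual_compute_usd, annual_ai_revenue_usd):
        return passed_review
    return True


print(eligible_for_contract(2_000_000_000, 0, passed_review=False))        # False: covered, not yet reviewed
print(eligible_for_contract(2_000_000_000, 0, passed_review=True))         # True: covered and cleared
print(eligible_for_contract(20_000_000, 50_000_000, passed_review=False))  # True: below both thresholds
```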

The revenue model question is equally central to Digg's long-term viability. Unlike the original Digg, which relied on banner advertising before losing ground to Reddit's community-driven model, the new platform has a more defensible niche: it aggregates signal from a closed, high-value network of AI insiders. This data has clear commercial value for hedge funds tracking AI sentiment, PR firms managing executive visibility, and talent recruiters identifying rising voices in the field. A subscription tier targeting institutional users, priced between $500 and $2,000 per month, could generate meaningful revenue even with a modest subscriber base. The longer-term play is licensing the influencer ranking data directly to AI labs, which might pay a premium to monitor their competitive positioning in real time.
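
To put that pricing in perspective, annual revenue from a flat subscription is simply subscribers × monthly price × 12. The sketch below works the arithmetic for the $500 to $2,000 range; the 200-subscriber figure is a hypothetical chosen for illustration, not a Digg number:

```python
MONTHS_PER_YEAR = 12
PRICE_RANGE_USD = (500, 2_000)  # monthly price range floated in the text


def annual_subscription_revenue(subscribers: int, monthly_price_usd: float) -> float:
    """Annual recurring revenue for a flat monthly subscription."""
    return subscribers * monthly_price_usd * MONTHS_PER_YEAR


subscribers = 200  # hypothetical subscriber count, for illustration only
low, high = (annual_subscription_revenue(subscribers, price) for price in PRICE_RANGE_USD)
print(f"${low:,.0f} to ${high:,.0f} per year")  # $1,200,000 to $4,800,000 per year
```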

Competitive reshuffle among AI labs and aggregators

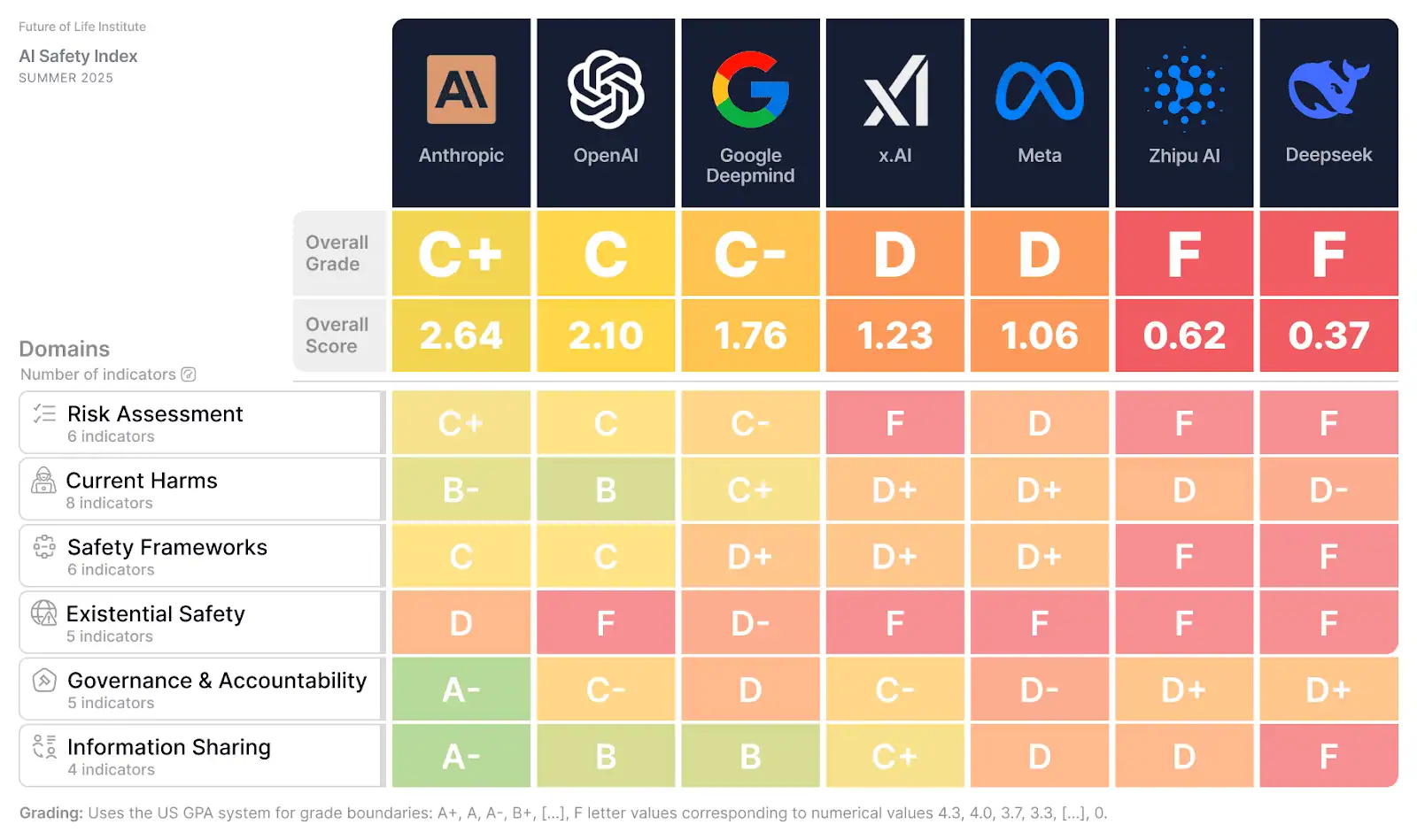

Digg's relaunch competes directly with existing AI news curation from TechCrunch, The Information, and X itself, but its differentiator is the influencer ranking system. The top 1,000 list creates a status game that drives engagement: ranking higher on Digg becomes a badge of influence, which incentivizes AI leaders to post more on X and, in turn, feeds Digg's traffic. On the regulatory side, the mandatory safety review proposal reshuffles competitive dynamics among AI labs. Companies that already participate in voluntary reviews with CAISI (the Commerce Department's Center for AI Standards and Innovation), including OpenAI and Anthropic, have a head start: they already have safety documentation and testing protocols in place. Labs that have not invested as heavily in safety infrastructure, whether smaller players or fast-growing newcomers like xAI, face a steeper compliance curve. The proposal also creates a new market for third-party safety auditors and testing firms, potentially spawning a cottage industry of AI safety consultancies.

The cascading effect extends beyond the labs themselves. Defense contractors like Palantir, which integrate frontier AI into government platforms, would need to verify that their AI vendors have cleared the safety review before signing new federal contracts. Legal teams at prime contractors are already drafting AI vendor qualification clauses modeled on existing defense supply-chain security requirements. If the proposal advances to legislation, the safety review market could generate hundreds of millions of dollars in professional services revenue within three years, creating a new category of specialized compliance firms that sit between the labs and the agencies they serve.

Downstream effects on hyperscalers, fabs, and enterprise buyers

The safety review proposal has second-order effects on the entire AI supply chain. If mandatory reviews become law, hyperscalers like Amazon, Google, and Microsoft will need to ensure that the AI models running on their cloud platforms for government customers have passed review, which drives demand for dedicated government cloud regions with enhanced compliance features. For chipmakers and fabs, the $100 million annual compute threshold means that only the largest AI labs are subject to the rules, but those labs are precisely the ones buying the most GPUs and custom accelerators. Data center operators face a new variable: government contracts could require models to be trained and deployed in facilities that meet specific security and audit standards. Enterprise buyers of AI services, from banks to healthcare systems, may also need to verify that their vendors' models have passed review, adding a new layer of procurement due diligence. And if reviews scrutinize the security of specific chips or architectures, labs would have an incentive to diversify their hardware stack.

Under the proposal, CAISI would transition from a voluntary advisory body to a permanent enforcement office with authority to conduct site visits at compute clusters and data centers. Labs would be required to disclose training runs, red-team findings, and safety testing results to government reviewers before a model is cleared for deployment on federal contracts. The group envisions a 60- to 90-day review cycle, which adds meaningful friction to the federal sales pipeline for labs operating on six-month release schedules. This timeline pressure creates an incentive to engage with CAISI proactively and submit documentation in parallel with training, rather than waiting until a model is finalized.
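
The timeline pressure can be made concrete with simple date arithmetic. A minimal sketch, assuming a roughly six-month (182-day) release cycle, a hypothetical finalization date, and that documents submitted during training let part of the 60- to 90-day review elapse before the model is finalized; the 45-day head start is an illustrative assumption, not part of the proposal:

```python
from datetime import date, timedelta

RELEASE_CYCLE_DAYS = 182  # assumed: roughly six months between releases
REVIEW_DAYS = (60, 90)    # review window envisioned in the proposal


def clearance_date(finalized: date, review_days: int, head_start_days: int = 0) -> date:
    """Date a model would be cleared for federal deployment.

    head_start_days is the portion of the review that ran in parallel with
    training before the model was finalized (0 = submit only after finalization).
    """
    remaining = max(review_days - head_start_days, 0)
    return finalized + timedelta(days=remaining)


finalized = date(2025, 1, 1)  # hypothetical finalization date
for days in REVIEW_DAYS:
    share = days / RELEASE_CYCLE_DAYS
    print(f"{days}-day review = {share:.0%} of a release cycle; "
          f"sequential: {clearance_date(finalized, days)}, "
          f"45-day head start: {clearance_date(finalized, days, head_start_days=45)}")
```

Under these assumptions, a 90-day review submitted only after finalization consumes about half of a six-month cycle before federal revenue can begin, while a 45-day parallel head start halves that wait.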

Policy signal for the AI market

The Americans for Responsible Innovation proposal is a clear signal that the voluntary era of AI safety is ending. By pushing for a permanent enforcement office within the government, the group is laying the groundwork for a regulatory framework that outlasts the current administration. The thresholds of $100 million in compute spending or $500 million in AI revenue are designed to capture the frontier labs while exempting smaller players, mirroring the tiered approach of the EU's AI Act. For investors, this would reduce regulatory uncertainty by defining clear rules of the road. For labs, it would create a two-tier market: companies that pass review can bid on government contracts, while those that do not are locked out of a growing pool of federal AI spending. The proposal also implicitly endorses the idea that AI safety is a technical discipline with measurable outcomes, rather than a vague ethical principle. That framing would accelerate the development of standardized safety benchmarks and testing methodologies, turning safety from a cost center into a competitive differentiator.

The proposal's framing around cyberattack and weapons development capabilities narrows the review scope to dual-use risk, not broad AI ethics. This matters for investors: the compliance burden is bounded and technically measurable, unlike open-ended ethical audits. Companies that build internal safety teams ahead of any mandate will be positioned to move faster through the review process and close government contracts sooner than competitors scrambling to retrofit compliance infrastructure. Digg's leaderboard adds a reputational dimension to this calculus, since a lab's public visibility in the AI discourse will increasingly signal its readiness to operate at the frontier of both technology and regulation.

The convergence of Digg's influencer-driven aggregation and the mandatory safety review proposal paints a picture of an AI industry that is simultaneously becoming more transparent and more regulated. Digg's leaderboard makes it easier to track which labs and personalities are driving the conversation, while the safety review regime forces those same labs to open their models to external scrutiny. For the next 12 to 18 months, the most important metrics for AI companies are not benchmark scores or funding rounds, but whether they rank near the top of Digg's leaderboard and whether they pass the government's safety review. The winners will be those that master both the attention economy and the compliance economy, turning public discourse and regulatory approval into durable competitive advantages.

The BossBlog Daily
