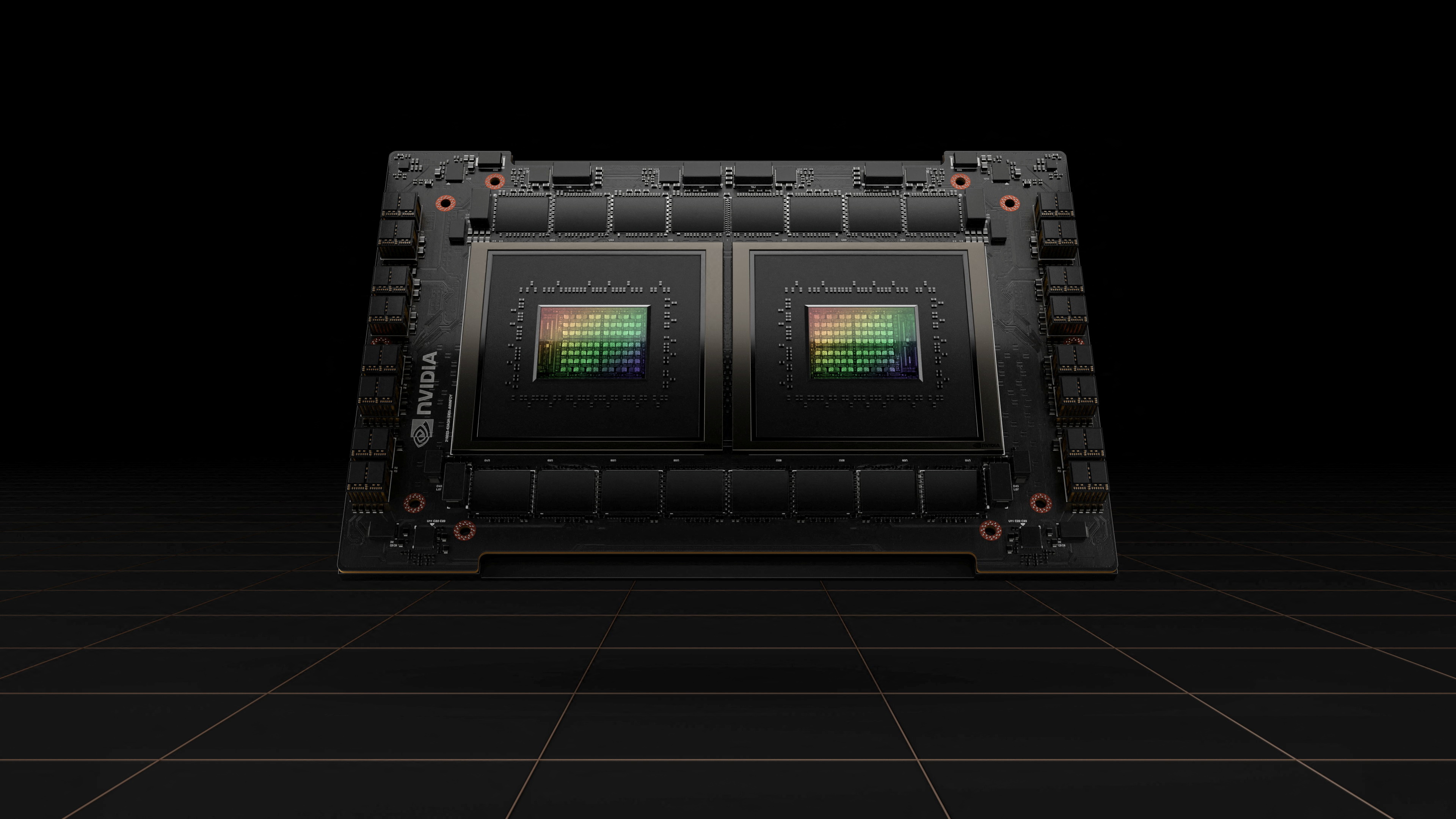

Nvidia’s latest move is not really about selling another chip. With NVLink Fusion, which Nvidia first announced at Computex 2025 and put back in the spotlight at GTC 2026 in March, the company is opening its high-speed interconnect so that third-party CPUs and custom AI accelerators can plug into systems built around Nvidia’s rack-scale GPU fabric.

That shifts the competitive question from whether large customers can replace Nvidia to how much of their own silicon they can build without leaving Nvidia’s architecture. For hyperscalers, cloud groups and sovereign AI projects, that is a far more realistic path than a clean-sheet break.

From Proprietary Link to Infrastructure Layer

Nvidia said MediaTek and Marvell are among the companies building custom silicon for NVLink Fusion, while Fujitsu and Qualcomm can connect their own CPUs to Nvidia GPUs over the same fabric. Cadence and Synopsys are supporting the design flow. In effect, Nvidia is extending the lock-in logic that made CUDA so dominant in AI software into the physical layout of the datacentre. Once compute, memory topology and networking are tuned around one fabric, switching costs rise well before application developers notice.

Why It Matters Over the Next Two Years

The next 12 to 24 months will be crucial because interconnect choices shape cluster design, collective communication libraries such as Nvidia’s NCCL, deployment tooling and procurement roadmaps long before systems are fully rolled out. NVLink Fusion gives customers a semi-custom option: they can differentiate with in-house CPUs or accelerators while still relying on Nvidia’s assumptions about how AI infrastructure is wired together.

That also raises the pressure on rival efforts such as UALink (Ultra Accelerator Link), the open accelerator interconnect standard, because open standards often struggle once a single ecosystem gains enough operational gravity.

If CUDA became the software control point for modern AI, NVLink Fusion could become the hardware control point that keeps the rest of the industry building inside an Nvidia-shaped datacentre.

