OpenAI has told investors it plans to build 30 gigawatts of compute capacity by 2030, roughly four times the 7-to-8-gigawatt target that Anthropic has communicated to its own investors. The disclosure highlights an intensifying infrastructure arms race among leading AI companies, which are competing for the electricity needed to train and deploy ever larger AI systems. Investment at this scale is unprecedented for AI infrastructure and raises questions about whether the industry's energy demands can be met sustainably.

The 30-gigawatt figure is roughly equivalent to the average power consumption of 25 million American homes, which would make it one of the largest planned additions of electricity demand to any nation's grid in the coming years. The challenge of delivering that much electricity to data centers has prompted discussion of nuclear power, large renewable installations, and novel approaches to power generation that technology infrastructure had not previously required.
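The homes comparison is a simple unit conversion. Here is a rough sketch of the arithmetic. It assumes an average US household uses about 10,500 kWh of electricity per year (an assumed round figure, not one taken from either company's disclosure) and that the full capacity is drawn continuously, year-round.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def homes_equivalent(capacity_gw: float, household_kwh_per_year: float = 10_500) -> float:
    """Return how many average homes draw, on average, the same power as capacity_gw.

    Assumption: household_kwh_per_year defaults to a rough, illustrative US average,
    and the capacity is treated as running at full load all year.
    """
    avg_household_kw = household_kwh_per_year / HOURS_PER_YEAR  # about 1.2 kW per home
    capacity_kw = capacity_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return capacity_kw / avg_household_kw


# Compare OpenAI's target with both ends of Anthropic's range.
for label, gw in [("OpenAI target", 30), ("Anthropic low", 7), ("Anthropic high", 8)]:
    print(f"{label}: {gw} GW is about {homes_equivalent(gw) / 1e6:.1f} million homes")
```

Under these assumptions, 30 GW works out to about 25 million homes, consistent with the figure above. A different household average would shift the result proportionally.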

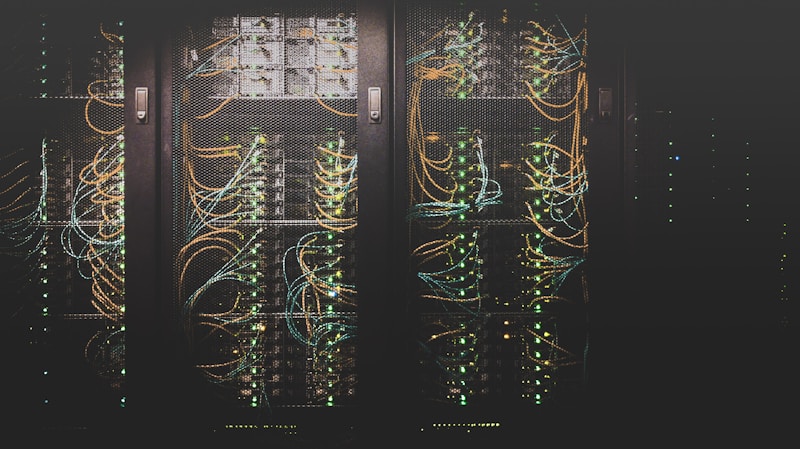

Infrastructure Scale

Supporting 30 gigawatts of compute capacity would mark a fundamental shift in how technology companies approach physical infrastructure. Conventional data centers draw megawatts of power, and even the largest facilities reach only into the hundreds of megawatts. Gigawatt-scale facilities would require approaches to power delivery, cooling, and construction that have not yet been deployed at commercial scale.

Delivering 30 gigawatts to data centers also poses geographic challenges: it requires new transmission infrastructure, risks conflicts with existing power consumers, and demands substantial investment in new generation capacity. Some of the renewable energy projects being developed to supply AI data centers are among the largest ever proposed, spanning tens of thousands of acres.

The 2030 deadline is extraordinarily compressed given the typical lead times for power generation and transmission projects. Because 30 GW far exceeds what any single data center could accommodate, OpenAI's plan would effectively require a nationwide network of computing facilities.
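To give a sense of scale, the sketch below counts how many facilities of a given size would be needed to add up to a target capacity. The facility sizes (300 MW, 500 MW, 1 GW) are illustrative assumptions, not figures from OpenAI's disclosure. The calculation also ignores cooling overhead, redundancy, and utilization, so treat it as a lower bound.

```python
import math


def facilities_needed(target_gw: float, facility_mw: float) -> int:
    """Minimum whole number of facilities of size facility_mw needed to reach target_gw.

    Illustrative only: assumes every facility is the same (hypothetical) size and
    that all of its capacity counts toward the target.
    """
    target_mw = target_gw * 1000  # 1 GW = 1,000 MW
    return math.ceil(target_mw / facility_mw)  # round up: a partial facility still has to be built


# Hypothetical facility sizes, from a large present-day site up to a 1 GW campus.
for size_mw in (300, 500, 1000):
    print(f"{size_mw} MW sites: {facilities_needed(30, size_mw)} needed for 30 GW")
```

Even with 1 GW campuses, which are larger than the biggest facilities operating today, reaching 30 GW would take about 30 separate sites.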

Anthropic's Position

Anthropic's more modest 7-to-8-gigawatt target may reflect a different strategy, one that emphasizes efficient model architectures over raw compute scale. It suggests the company believes meaningful AI capabilities can be achieved without matching the infrastructure investments its competitors are planning.

The gap between the two companies' targets raises the question of which approach will prove more viable. Anthropic may be betting on algorithmic improvements and efficiency gains that reduce the compute needed for advanced AI capabilities, while OpenAI is pursuing a strategy of overwhelming scale.

Which approach succeeds could reshape the competition between the two companies. If OpenAI's infrastructure investments translate into superior AI capabilities, it may establish a lead that competitors cannot easily close. If Anthropic's efficiency-focused approach delivers comparable results with less infrastructure, Anthropic may earn better returns on its investment.

Competitive Implications

The compute arms race has implications for the broader AI industry beyond the two leading companies. Startups and smaller companies that cannot match the infrastructure investments of the largest players may find themselves at a disadvantage in developing competitive AI systems. The capital requirements for competing at the frontier of AI development are increasing rapidly, potentially concentrating the industry among a small number of well-funded players.

Pressure to build massive compute infrastructure may also change how AI companies approach partnerships with utilities, energy providers, and governments. Negotiating power supply contracts and grid infrastructure investments is becoming a strategic priority that could determine which companies can execute on their AI development plans.

Long investment timelines also create execution risks that could shift the competitive balance between OpenAI and Anthropic. If OpenAI falls behind on its 30-gigawatt build-out while Anthropic's smaller expansion proceeds more smoothly, OpenAI's scale advantage could narrow.

Energy Market Effects

Competition among AI companies for power generation resources is beginning to affect energy markets beyond the technology sector. The massive renewable energy projects being developed to supply AI data centers are creating new demand for solar panels, wind turbines, and battery storage systems, and that demand competes with purchases by traditional energy consumers.

The interest in nuclear power for AI data centers reflects the challenge of meeting gigawatt-scale power requirements with renewable energy alone. Nuclear power provides consistent, baseload power generation that complements the intermittent nature of renewable energy sources, but nuclear projects face lengthy development timelines and significant regulatory hurdles.

The effect on electricity prices in regions where AI data centers are being developed could be substantial, potentially affecting residential and commercial consumers who compete for the same power generation resources. Some states have begun to consider how to allocate scarce transmission capacity between AI data centers and other consumers.

Investment Timeline

The 2030 timeline for reaching 30 gigawatts of compute capacity represents an extraordinarily aggressive development schedule given the typical lead times for power generation and data center construction projects. The investments required would need to be committed in the next few years to have any hope of meeting the 2030 target.

Investors in AI companies are being asked to fund infrastructure investments that rival the largest industrial projects in history, creating capital allocation challenges for companies that must balance infrastructure spending against research and development needs. The competition for capital may intensify as investors assess whether the infrastructure investments are necessary for competitive positioning or represent excessive spending.

The execution risks associated with developing gigawatt-scale compute infrastructure include regulatory approvals, construction timelines, technology development, and the availability of skilled labor to build and operate the facilities. Any significant delays could affect whether the promised compute capacity becomes available when needed for AI model training and deployment.
