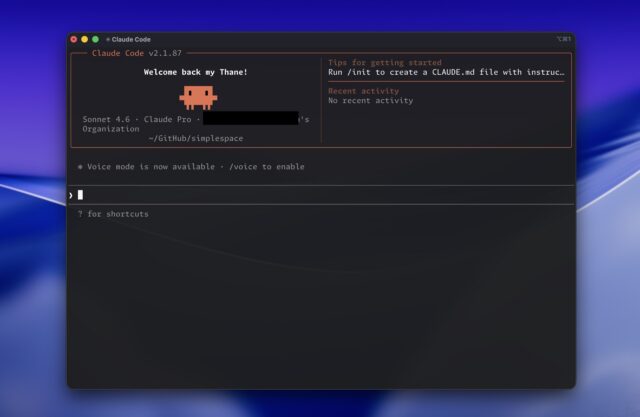

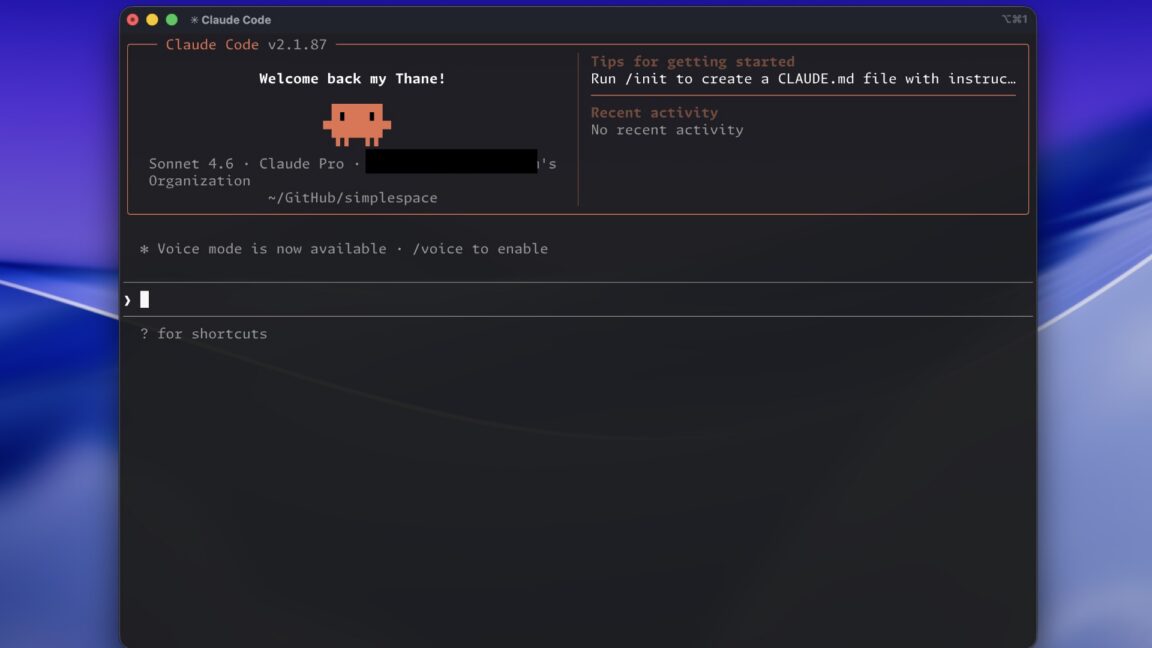

Anthropic has suffered a significant security incident, accidentally exposing the entire source code of its Claude Code command-line interface through a source map file shipped in version 2.1.88 of its npm package. Nearly 2,000 TypeScript files and more than 512,000 lines of code were exposed before the leak was discovered and contained. The incident is Anthropic's second major security lapse within days, following the exposure of details about its Mythos AI model.

The source code exposure means competitors can now study how Anthropic built its popular AI coding tool, potentially revealing proprietary techniques and architectural decisions. Importantly, model weights were not exposed in this incident, meaning the core AI capabilities remain protected.

The timing of the leak compounds Anthropic's recent difficulties, as the company had just notified government officials about security concerns regarding its Mythos model. The dual incidents raise questions about Anthropic's operational security practices and quality control procedures.

Anthropic has not disclosed how long the source code was exposed before the issue was identified and remediated, or whether any parties downloaded the source code during the vulnerable period.

Incident Details

The exposure occurred through a source map file that should not have been included in the published npm package. Source maps are development tools that map compiled code back to original source files, useful for debugging but not intended for production distributions.
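To make the mechanism concrete, here is a minimal Python sketch of why a shipped source map can expose original code. It assumes the map follows the Source Map v3 JSON layout and embeds file contents in the optional `sourcesContent` array. The article does not say which fields the Claude Code map contained, and both the sample map and the `recover_sources` helper are hypothetical.

```python
import json


def recover_sources(map_text: str) -> dict[str, str]:
    """Return {original_path: original_source} for every embedded source.

    Assumes a Source Map v3 document. `sourcesContent` is optional, so
    entries without embedded content (missing or null) are skipped.
    """
    source_map = json.loads(map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(paths, contents):
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical, hand-written map for illustration only.
SAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const run = (): void => {};\n", None],
    "names": [],
    "mappings": "",
})

if __name__ == "__main__":
    for path, source in recover_sources(SAMPLE_MAP).items():
        print(f"{path}: {len(source)} characters of original source")
```

When a map embeds `sourcesContent`, no decoding of the `mappings` string is needed to read the original files. That is why a single stray `.map` file can be enough to reveal a whole codebase.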

Anthropic's engineering team apparently failed to exclude source maps when packaging Claude Code for npm distribution. This oversight allowed anyone who downloaded the package to access the complete development source code.

The Claude Code CLI has gained significant adoption among developers who use the tool to interact with Claude AI through terminal interfaces. The exposure reveals implementation details including API integration logic, prompt engineering, and response parsing functionality.

Together, the more than 512,000 exposed lines cover substantial portions of Claude Code's implementation.

Security Implications

The source code exposure reveals implementation details that Anthropic had presumably intended to keep proprietary. Architectural decisions, coding patterns, and technical approaches are now visible to competitors and security researchers.

Security researchers can analyze the exposed code for potential vulnerabilities in Claude Code's implementation. While model weights remain safe, implementation bugs could potentially be exploited.

The incident demonstrates that security encompasses more than protecting core AI assets. Supply chain security and code distribution practices require equally rigorous attention.

Technology companies have long gathered competitive intelligence from publicly available source code. Anthropic's competitors can now validate their own approaches and potentially identify techniques worth adopting.

Competitive Impact

Companies developing AI coding assistants or competing products can now study Anthropic's implementation directly. The Claude Code source provides a detailed look at how a leading AI laboratory approaches developer tooling.

Specific implementation techniques for handling context windows, managing conversation state, and integrating with development environments are now visible. These details could inform competitive product development.

The open-source community has historically benefited from exposed code through learning and improvement of industry practices. However, Anthropic did not intentionally open-source Claude Code, making this exposure involuntary rather than deliberate sharing.

Whether competitors can meaningfully leverage the exposed code depends on how distinctive Anthropic's implementation proves to be.

Response and Remediation

Anthropic has removed the source map from the npm package and presumably conducted an internal review of how the exposure occurred. The company has not publicly detailed the timeline of discovery or specific remediation steps.

Notifying affected users and the broader security community is best practice for such incidents. Transparency about the scope and duration of exposure helps the industry learn from the event.

Shipping source maps in published npm packages is a common misconfiguration that affects many software projects. The Anthropic incident serves as a reminder for companies to audit what their distribution packages actually contain.
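One way to audit a package is to list the contents of the tarball that would be published. The Python sketch below assumes a gzipped tarball such as the one `npm pack` produces and flags any member ending in `.map`. The `find_source_maps` and `build_demo_tarball` helpers are hypothetical names, and the demo tarball is built in memory so the example runs without npm.

```python
import io
import tarfile


def find_source_maps(tarball: bytes) -> list[str]:
    """List members of a gzipped package tarball whose names end in .map."""
    with tarfile.open(fileobj=io.BytesIO(tarball), mode="r:gz") as archive:
        return sorted(
            member.name
            for member in archive.getmembers()
            if member.isfile() and member.name.endswith(".map")
        )


def build_demo_tarball(files: dict[str, bytes]) -> bytes:
    """Build a small in-memory tarball so the example runs without npm."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue()


if __name__ == "__main__":
    demo = build_demo_tarball({
        "package/package.json": b"{}",
        "package/cli.js": b"console.log('hi');\n",
        "package/cli.js.map": b'{"version": 3}',
    })
    print(find_source_maps(demo))
```

Checking the packed artifact rather than the source tree matters here, because ignore rules and build steps can make the two differ.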

Security audits of the exposed code by external researchers could provide valuable feedback. Responsible disclosure of any vulnerabilities discovered would help Anthropic improve Claude Code security.

Broader Security Culture

The second incident in days suggests Anthropic faces challenges in maintaining operational security as the company scales. The combination of the Mythos model exposure and source code leak indicates gaps in review processes.

Rapid company growth often creates security execution challenges as new team members and accelerated development cadences increase the probability of mistakes. Anthropic has grown substantially and may be experiencing growing pains.

Security culture requires ongoing attention and cannot be treated as a one-time implementation. Regular audits, automated checks, and security training help maintain vigilance against common mistakes.

The incident highlights how cloud-era development creates new exposure vectors that traditional software practices did not anticipate.

Industry Response

The AI development community has reacted with a mixture of concern and a sense of opportunity: concern about the security practices of AI laboratories, and the opportunity to study implementation details from a leading AI company.

Security practitioners have used the incident to emphasize the importance of supply chain security. The npm ecosystem has experienced multiple incidents in which malicious packages compromised downstream users; an accidental exposure from a legitimate publisher is less severe by comparison, but still significant.

AI industry analysts note that security practices have not kept pace with AI capability advancement. Labs racing to develop more powerful models sometimes prioritize speed over security rigor.

The incident may prompt industry-wide discussions about best practices for code distribution and security review.

Impact on Developer Trust

Claude Code has become an essential tool for many developers who rely on it for code completion, debugging, and learning. The source code exposure may affect how users perceive the tool's security and reliability.

Developers who have integrated Claude Code into their workflows may question whether other security exposures could affect their projects. Trust, once damaged, requires substantial effort to rebuild.

Enterprise customers evaluating Claude Code for team use may reconsider given the security track record. Corporate security teams conduct due diligence that now includes Anthropic's recent incident history.

Security researchers examining the exposed code have begun documenting their findings. Preliminary analysis suggests Anthropic uses standard practices for many implementation patterns while employing distinctive approaches for AI integration layers.

Future Precautions

Anthropic will likely implement additional checks to prevent similar exposures in the future. Automated scanning of packages before distribution, mandatory security reviews, and enhanced CI/CD controls represent common mitigations.
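As an illustration of what automated pre-distribution scanning might look like, the Python sketch below walks a build directory and reports `.map` files and `sourceMappingURL` comments in JavaScript output, returning a non-zero result that a CI job could use to block publication. This is not Anthropic's actual process. The `scan_build_output` and `gate` names, the file extensions checked, and the demo directory are all assumptions made for the example.

```python
import re
import tempfile
from pathlib import Path

# Matches the trailing comment that links a generated file to its map,
# e.g. "//# sourceMappingURL=cli.js.map" or the older "//@" form.
SOURCE_MAP_COMMENT = re.compile(r"//[#@]\s*sourceMappingURL=(\S+)")


def scan_build_output(root: Path) -> list[str]:
    """Return human-readable findings for a build directory."""
    findings = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        relative = path.relative_to(root).as_posix()
        if path.suffix == ".map":
            findings.append(f"{relative}: source map file")
        elif path.suffix in {".js", ".mjs", ".cjs"}:
            text = path.read_text(encoding="utf-8", errors="replace")
            match = SOURCE_MAP_COMMENT.search(text)
            if match:
                kind = "inline" if match.group(1).startswith("data:") else "external"
                findings.append(f"{relative}: {kind} sourceMappingURL comment")
    return findings


def gate(root: Path) -> int:
    """Exit-code style result: 0 when clean, 1 when anything was found."""
    findings = scan_build_output(root)
    for finding in findings:
        print(f"BLOCKED {finding}")
    return 1 if findings else 0


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        dist = Path(tmp)
        (dist / "cli.js").write_text("run();\n//# sourceMappingURL=cli.js.map\n")
        (dist / "cli.js.map").write_text('{"version": 3}')
        print("exit code:", gate(dist))
```

The check covers inline `data:` URLs as well as external references, since a map embedded directly in a bundle exposes the same information without any separate `.map` file.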

The company may engage third-party security firms to audit its distribution practices. External validation provides assurance that internal process improvements are effective.

Industry-wide, the incident may prompt npm and other package repositories to offer automated scanning for exposed source maps. Repository-level protections could prevent similar incidents across the software ecosystem.

The Anthropic case provides a template for how companies should respond to security incidents. Transparent communication, rapid remediation, and industry-wide learning represent positive outcomes possible from negative events.
